Updates

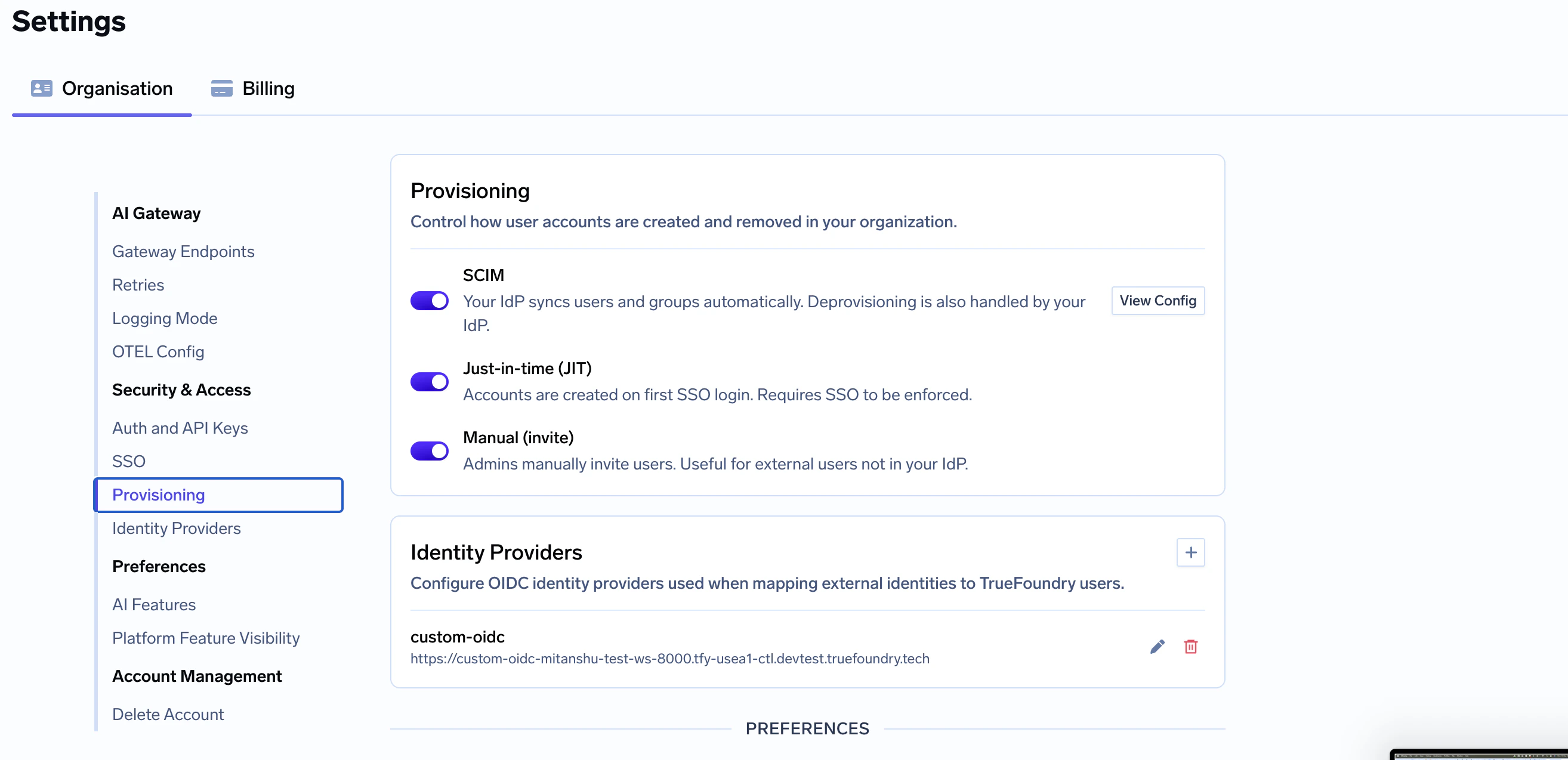

- A new Provisioning settings page (`settings/provisioning`) is available for configuring SCIM, JIT, and manual user provisioning modes. You can learn more about it here

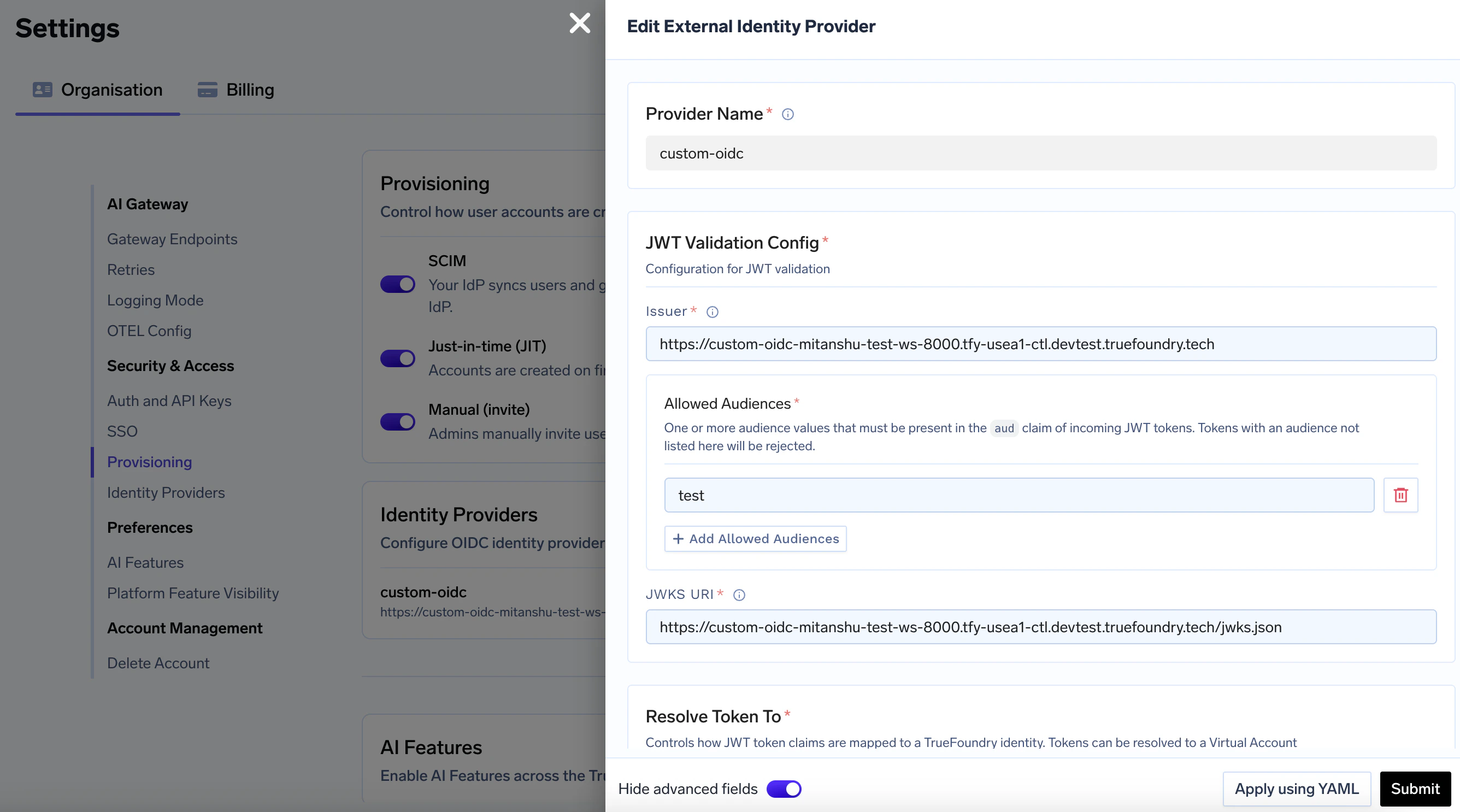

- Identity Providers configuration has been consolidated. A new Identity Providers section is available under Organization Settings. You can learn more about it here

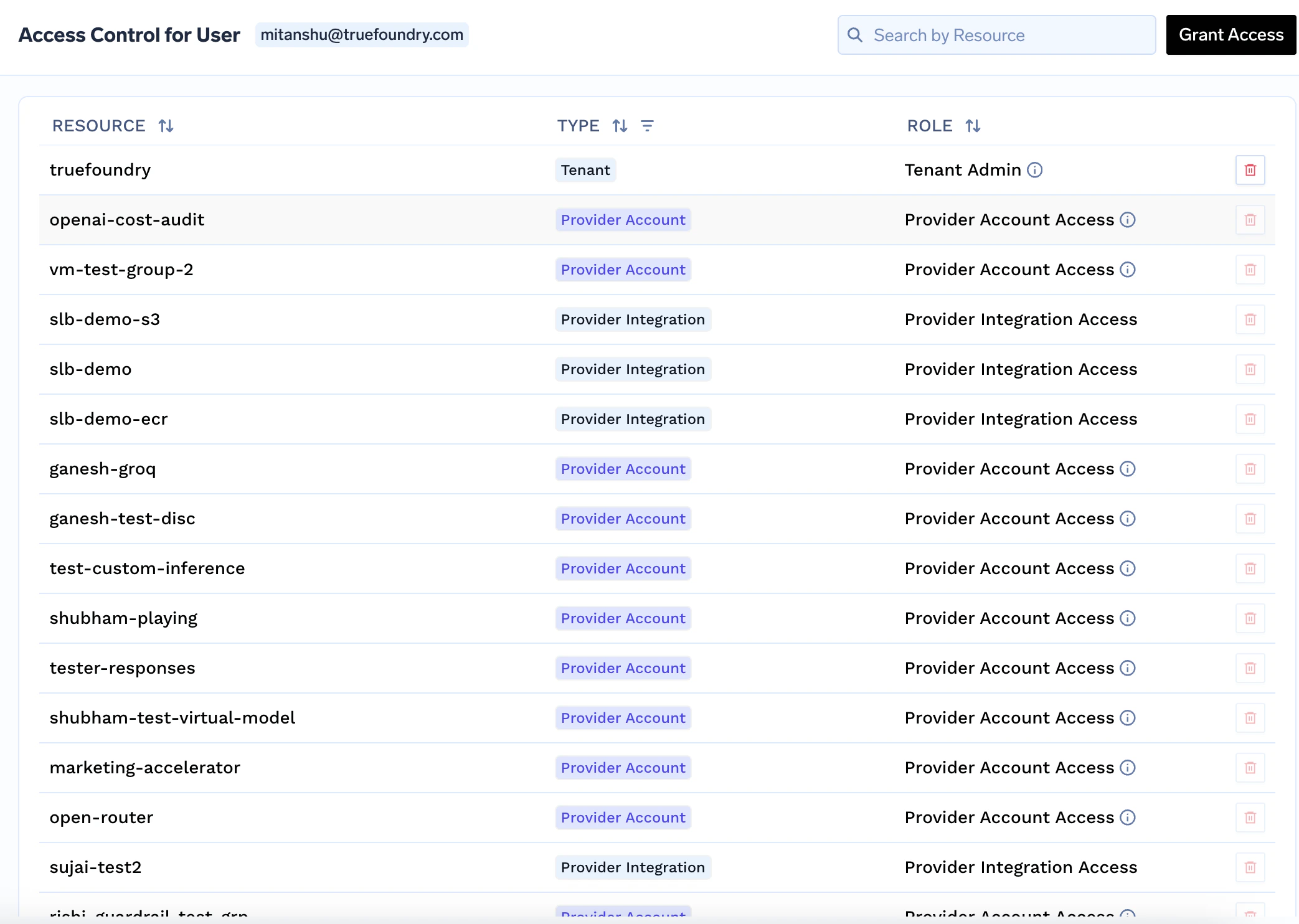

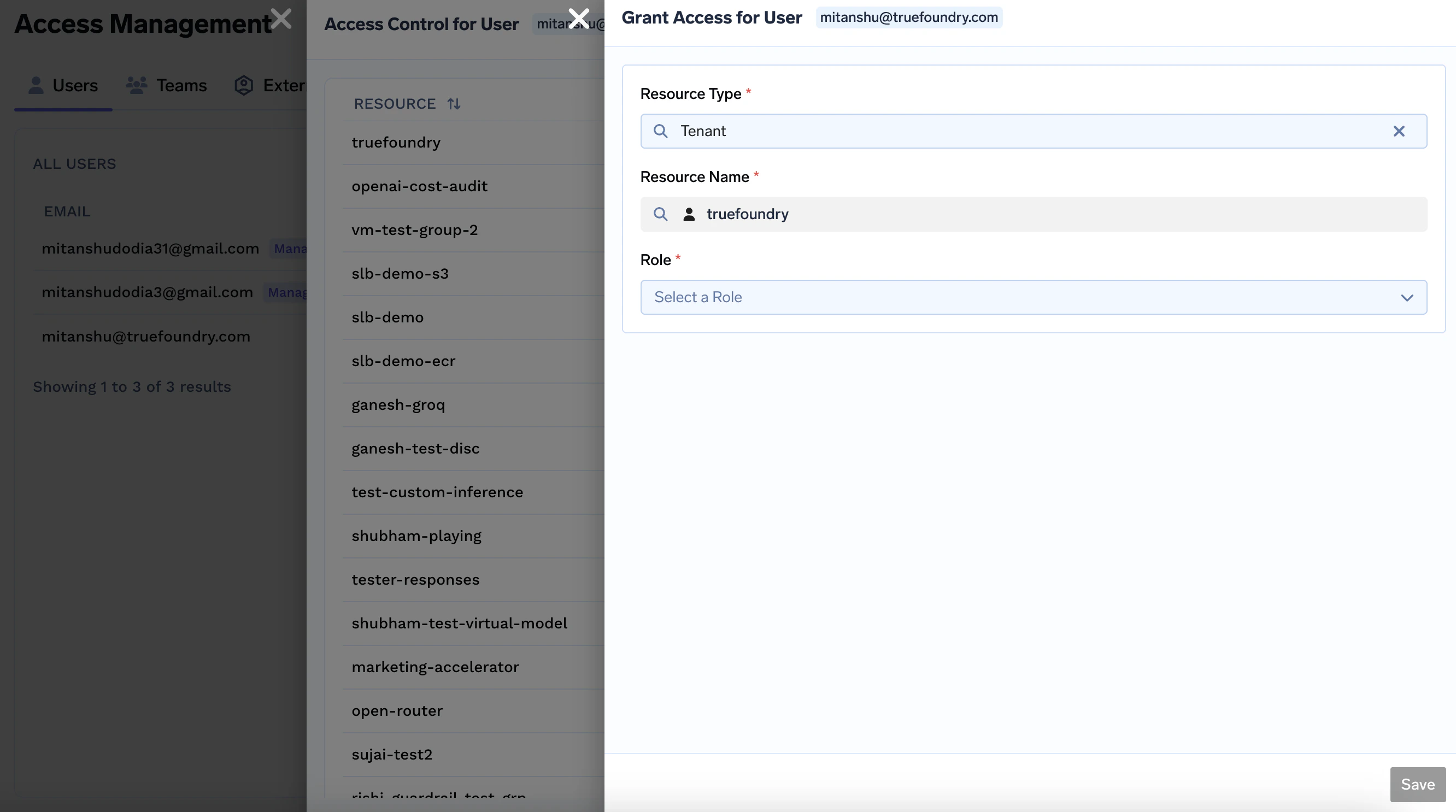

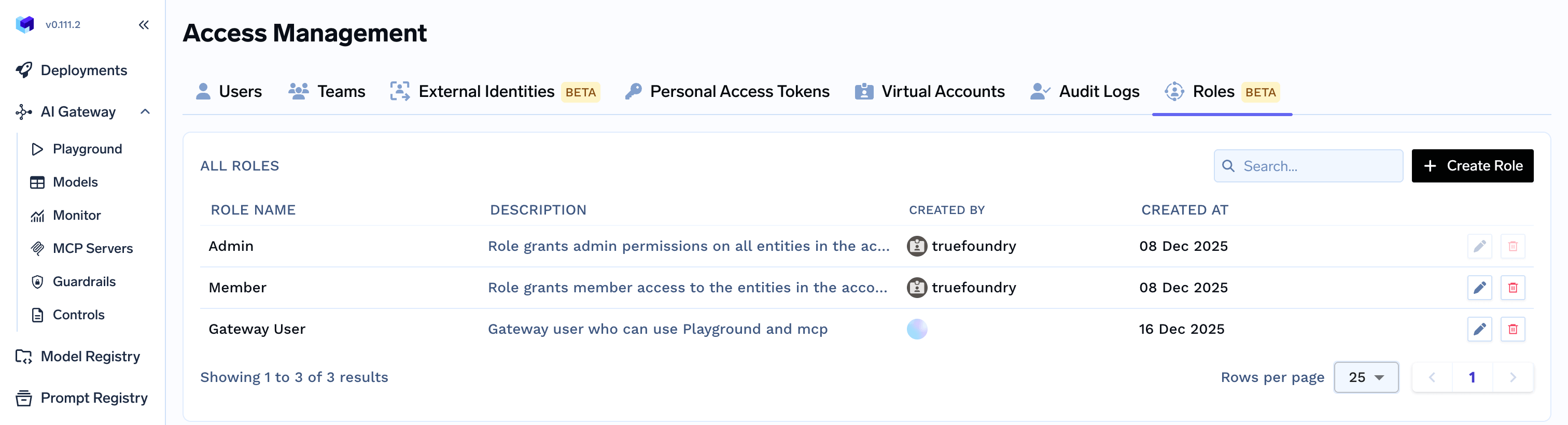

- A new access control feature lets you see all the permissions a user has, and grant or revoke access for users.

- To grant or update access for a user, click the Access Control button, then the Grant Access button in the top right corner, then select a role.

- You can now assign multiple roles to a User.

- You can now also assign roles to a Team.

AI Gateway

- Agents now support file uploads in user messages.

- Skills registry is now available, allowing you to register and manage agent skills on the platform. You can learn more about it here

- Tenant-scoped global guardrail settings are now available, allowing operators to configure a default guardrail timeout and whether input guardrails run in parallel with model execution.

- Image-modality token usage is now tracked and priced separately from text tokens across Gemini/Vertex and image endpoints.

- Client disconnects during inference now short-circuit load-balancing retries and return HTTP 499 instead of falling through to additional targets.

- Bedrock proxy errors now propagate the real upstream HTTP status code instead of always returning 500.

- Identity provider token resolution now supports mapping external JWTs to TrueFoundry users or virtual accounts based on configured claim rules, with team membership derived from JWT claims.

- Support for CrowdStrike AIDR guardrail integration is now available, replacing the deprecated Pangea integration. Existing Pangea guardrail configurations must be migrated to CrowdStrike AIDR. You can learn more about it here

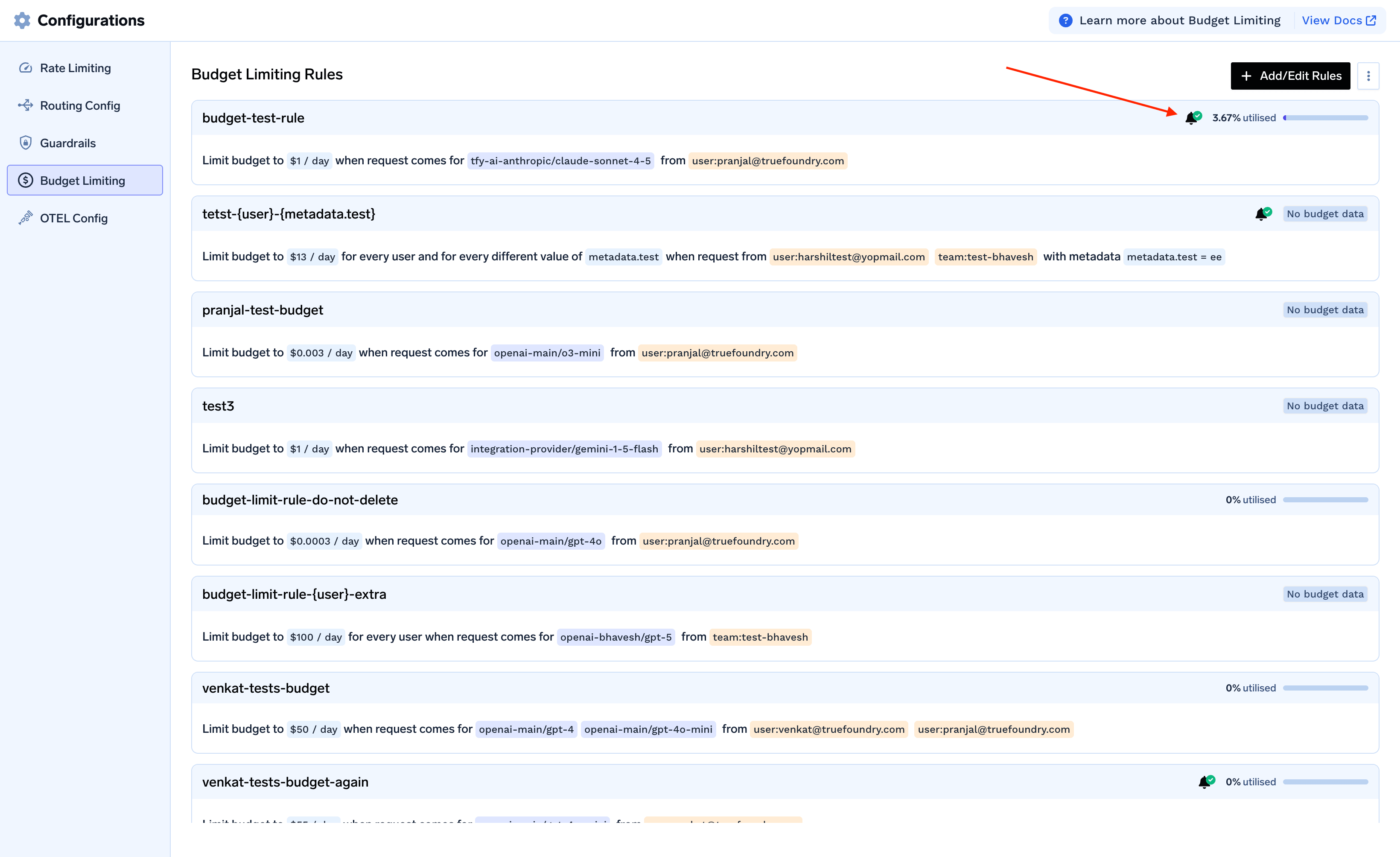

- Quarterly budget limits are now supported for gateway cost enforcement, in addition to existing daily and monthly windows.

AI Engineering

- EKS 1.35 is now supported for new AWS cluster provisioning and is the new default Kubernetes version.

- Custom Docker registry integrations now accept hostnames with optional port numbers and support HTTP-based registries.

- The model weight validation for deployment now accepts any file ending in `.safetensors`, broadening compatibility with additional HuggingFace model layouts.

- Spark Notebook is available as a new workbench type, with support for configuring driver/executor resources, scaling mode, and Spark Connect. This feature is gated behind a feature flag.

- Human-in-the-loop signal support is now available for Flyte workflow executions, allowing users to list, approve, and set signals on running workflows.

Release instructions

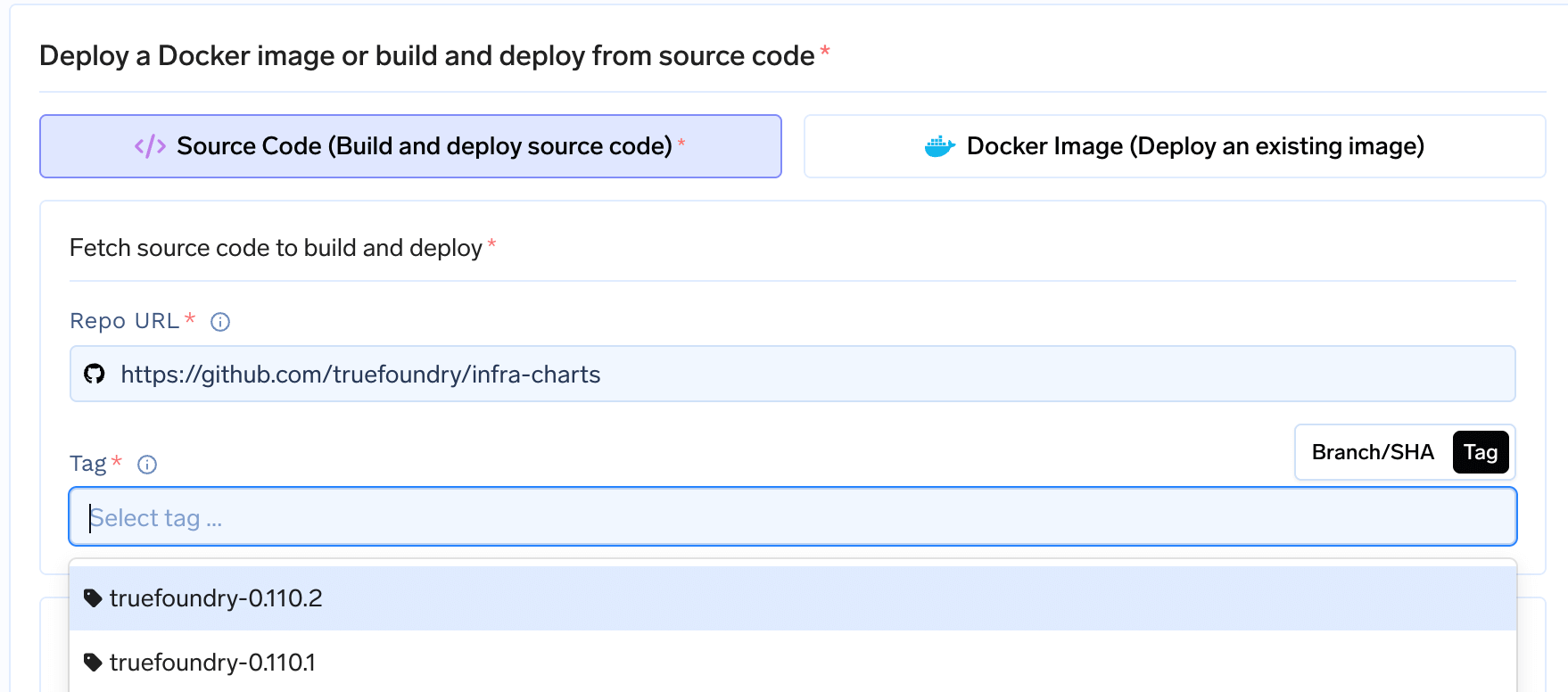

- Update the `truefoundry` Helm chart to version `0.144.0`.

- Update the `tfy-llm-gateway` chart to version `0.144.0`.

Updates

AI Gateway

- Guardrails now inspect and moderate tool call inputs and tool result outputs (across Bedrock, Gemini, and Messages adapters), not just visible text content. Blocking verdicts correctly prevent content from leaking through the mutation/redaction path.

- Guardrail plugins in audit mode now consistently emit `audit_mode_flag` in traces instead of silently passing, and multiple plugins have been aligned to a unified verdict and enforcement model.

- AWS Bedrock guardrail violation messages now include topic policy hits alongside existing word, content, and PII policy violations.

- Anthropic `anthropic_beta` headers are now forwarded to Bedrock and Vertex Anthropic Messages requests, with a configurable Bedrock-side allowlist (`BEDROCK_ANTHROPIC_BETA_ALLOWLIST`) that filters unsupported tokens.

- Virtual Model name validation now allows uppercase characters and names up to 64 characters.

Release instructions

- Update the `truefoundry` Helm chart to version `0.142.3`.

- Update the `tfy-llm-gateway` chart to version `0.142.3`.

Updates

AI Gateway

- Custom Endpoints provider is now supported, enabling you to bring your own inference endpoints as a first-class provider type in the AI Gateway with full access control and model management. You can learn more about it here

- Google Vertex AI now supports multi-region endpoints: you can set the Vertex region to `us`, `eu`, or `global` for both provider accounts and per-model integrations.

- Smallest AI (Waves) is now available as a TTS and STT provider, supporting text-to-speech and speech-to-text proxy requests with API-key authentication.

- Vertex AI embeddings for specific Gemini embedding models now route to the `:embedContent` API instead of `:predict`, with support for multimodal embedding inputs (text, image, video).

AI Engineering

- Snowflake task support is now available in Flyte workflows, allowing you to configure and run Snowflake SQL tasks alongside existing container and Spark tasks.

Release instructions

- Update the `truefoundry` Helm chart to version `0.141.1`.

- Update the `tfy-llm-gateway` chart to version `0.141.1`.

- An option to disable the security context for all TrueFoundry components has been added. This is useful for clusters with restricted Security Context Constraints (e.g. OpenShift). See How to deploy on OpenShift with restricted SCCs for details.

- The default security context for NATS has been removed. If your cluster requires a security context for NATS, you must now pass it explicitly via your Helm values:

Updates

AI Gateway

- Google Vertex provider accounts now support Workload Identity Federation (WIF) as an alternative to service-account key files.

- Bedrock Nova embeddings are now supported, including text and base64 image inputs with a dedicated response transformer.

- Structured output handling for Azure OpenAI models is fixed by resolving the effective foundation model name before checking `json_schema` response format support.

- Computer-use tool definitions are now translated to Gemini’s native format for both Google AI Studio and Vertex AI chat completions.

- Guardrail policies can now target individual MCP tools using `server:tool` identifiers in the `mcpServers` field, enabling fine-grained guardrail scoping.

- End-to-end request tracing now covers all provider operation endpoints including batches, files, fine-tuning, image generation, and moderation.

- For Claude Code Max, TrueFoundry Anthropic provider accounts must omit the API key entirely instead of using a dummy key. You can learn more about it here

- OpenTelemetry metrics export is now enabled by default for the gateway. Operators who do not want OTLP metrics emission should explicitly disable `ENABLE_OTEL_METRICS_EXPORTER`.

- MCP elicitation is now supported for remote MCP servers: the gateway can forward JSON-RPC response payloads (not just requests) upstream.

- The OpenAI Responses API is now supported as a new inference type, with routing, model validation, and code snippet generation available for both streaming and non-streaming modes.

- Virtual Model `model_types` are now restricted to `chat`, `completion`, `embedding`, `rerank`, and `moderation`. Existing configs using other types (e.g. `realtime`) will need to be updated.

- Added support for automatically deploying stdio-based MCP servers.

AI Engineering

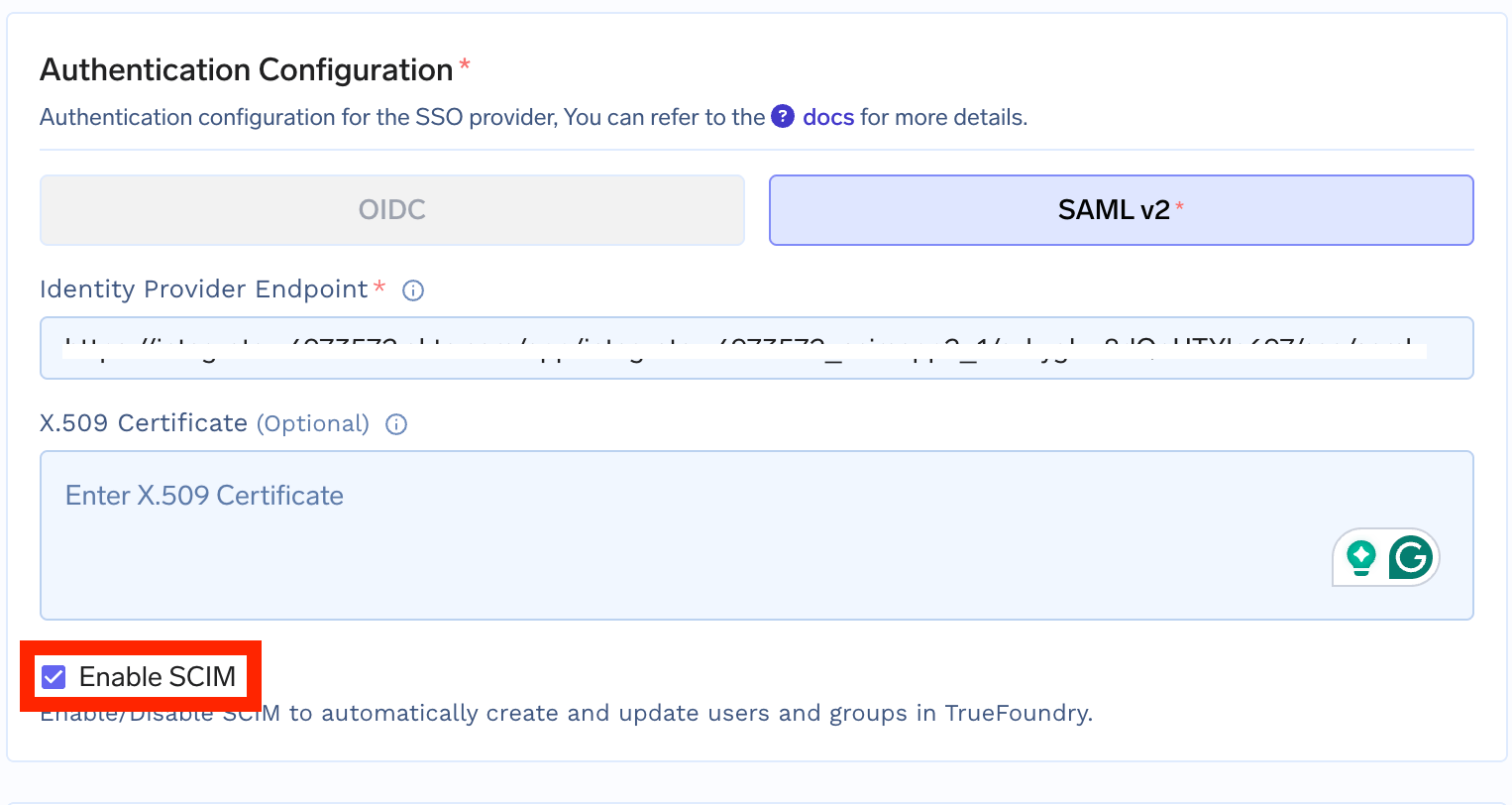

- SCIM provisioning is now supported for OIDC identity providers via a new `enable_scim` toggle in SSO settings, with improved RFC compliance across all SCIM v2 API responses.

- The Kubecost addon has been removed from cluster infrastructure configuration across all cloud providers.

- GCP Workload Identity Federation is now supported for trace and log storage backends, enabling keyless authentication for GCS-backed Delta tables.

- Slack webhook is now supported as a notification target for alert rules, alongside existing Email, Slack Bot, and PagerDuty options.

Release instructions

- Update the `truefoundry` Helm chart to version `0.139.4`.

- Update the `tfy-llm-gateway` chart to version `0.139.2`.

Updates

AI Gateway

- Guardrails now support targeting individual MCP tools per server, allowing fine-grained control.

- Gateway provider responses now include a `provider` field indicating which upstream provider served the request.

AI Engineering

- Spark jobs no longer set an explicit `memoryOverhead: 0M`, allowing Spark operator defaults to apply for driver and executor memory sizing.

- External identity authentication now supports nested/dotted JWT claim paths for extracting `unique_id_claim` and `email_claim` from token payloads.

- Trace search has been optimized and is now faster.

- Dry-run apply requests now skip activity logging.
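
As an illustration of what a nested/dotted JWT claim path means, the sketch below resolves paths such as `user.profile.email` against a decoded token payload. The function name `resolve_claim` and the sample payload are hypothetical and are not part of the TrueFoundry API; the platform's actual resolution logic may differ.

```python
from typing import Any, Optional


def resolve_claim(payload: dict, path: str) -> Optional[Any]:
    """Walk a dotted path such as "user.profile.email" through nested dicts.

    Returns None when any segment of the path is missing.
    """
    current: Any = payload
    for key in path.split("."):
        if not isinstance(current, dict) or key not in current:
            return None
        current = current[key]
    return current


# Hypothetical decoded JWT payload, for illustration only.
payload = {
    "sub": "abc-123",
    "user": {"profile": {"email": "dev@example.com"}},
}

unique_id = resolve_claim(payload, "sub")               # top-level claim
email = resolve_claim(payload, "user.profile.email")    # nested claim
missing = resolve_claim(payload, "user.team.name")      # absent path
```

A top-level claim is simply a path with one segment, so the same lookup covers both flat and nested configurations.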

Release instructions

- Update the `truefoundry` Helm chart to version `0.136.6`.

- Update the `tfy-llm-gateway` chart to version `0.136.1`.

- If you’re using `tfy-logs` for Logging, remove `retentionMaxDiskUsagePercent` from Values. Go to Deployment > Helm > Tfy-logs > Edit Values and delete the `retentionMaxDiskUsagePercent` field.

Updates

AI Gateway

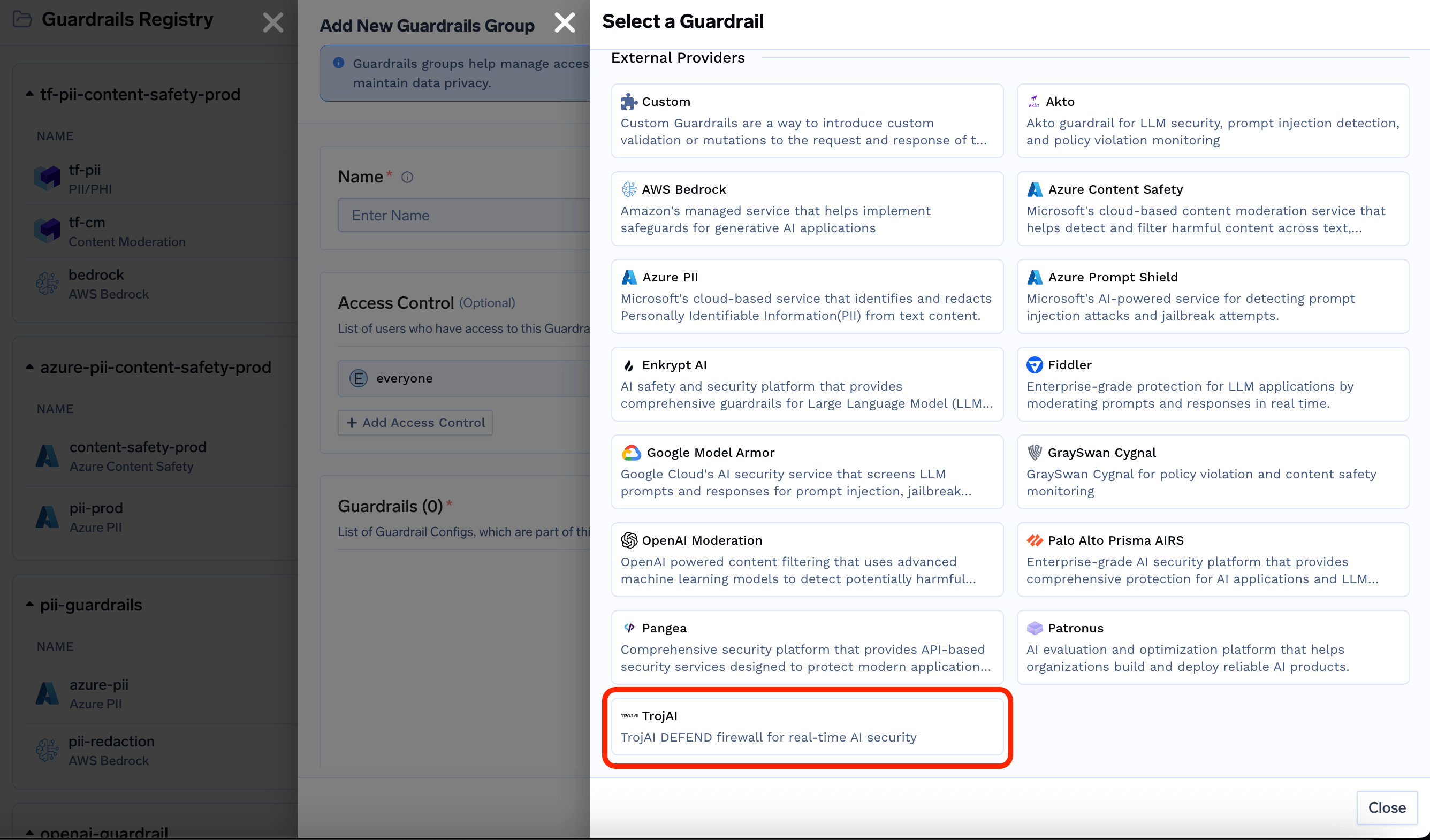

- Added TrojAI DEFEND as a new guardrail integration, supporting both blocking (validate) and content-sanitizing (mutate) modes via the TrojAI firewall API.

- Azure AI Foundry now supports audio endpoints for text-to-speech and speech-to-text operations.

- DeepInfra embeddings are now supported through the gateway.

- PagerDuty is now available as a notification target for gateway budget alerts.

- Google Gemini and Vertex AI token accounting now correctly includes thinking/reasoning tokens in completion token counts for accurate cost calculations.

AI Engineering

- Added an Access Control drawer throughout the platform, providing visibility into who has what permissions on any resource.

- Team names now allow uppercase letters and underscores.

Release instructions

- Update the `truefoundry` Helm chart to version `0.135.4`.

- Update the `tfy-llm-gateway` chart to version `0.135.0`.

Updates

AI Gateway

- MCP auth overrides now support virtual accounts in addition to users, allowing programmatic service identities to authenticate to MCP servers.

AI Engineering

- HashiCorp Vault integrations now support AppRole authentication in addition to token-based auth, and `root_path` validation accepts nested slash-delimited paths.

- Custom S3-compatible blob storage integrations are now supported for tracing and data routing, including plain HTTP endpoints.

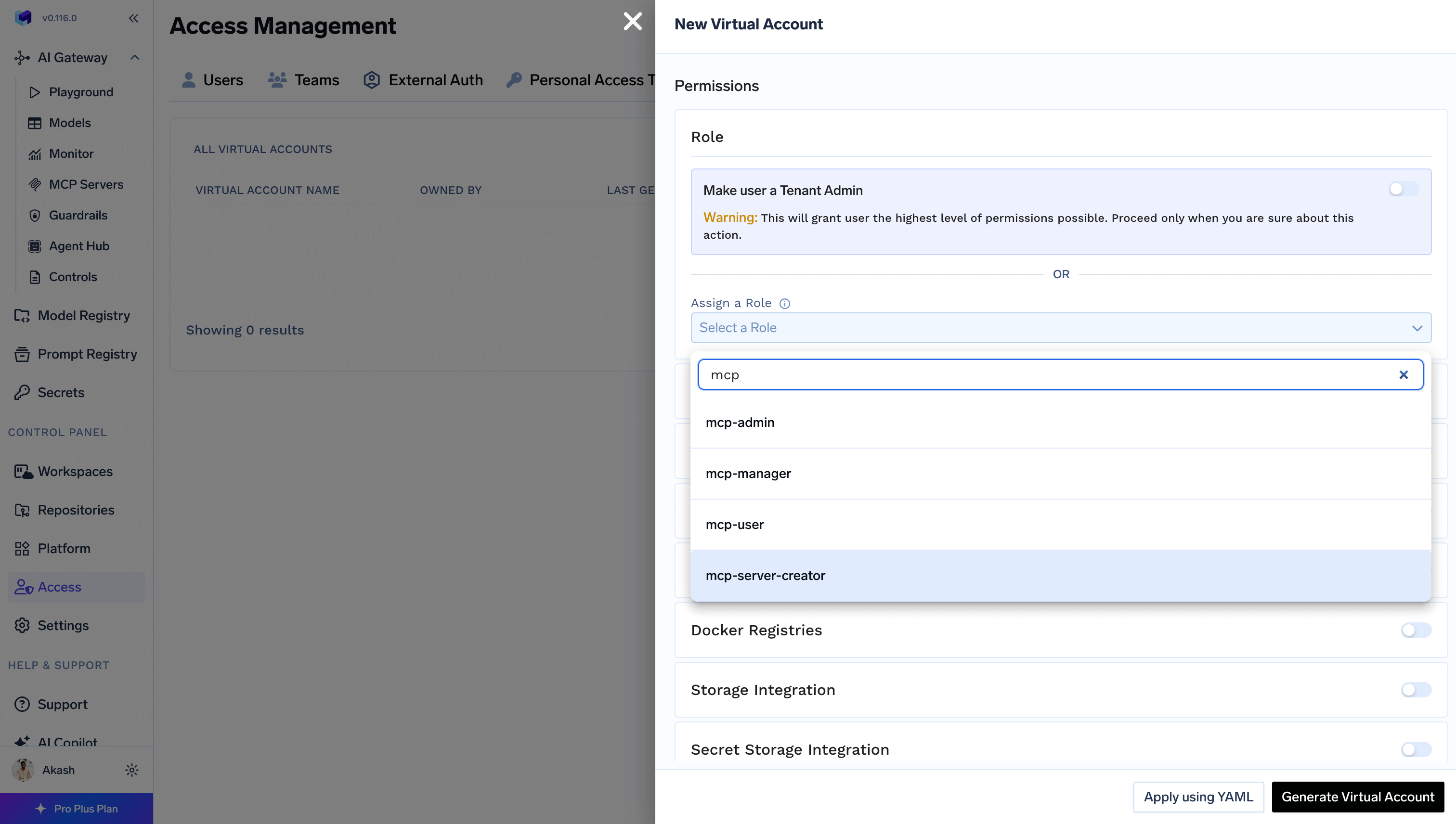

- Virtual account permissions now accept role names (not just IDs) for account-scoped roles.

Release instructions

- Update the `truefoundry` Helm chart to version `0.134.7`.

- Update the `tfy-llm-gateway` chart to version `0.134.3`.

Updates

AI Gateway

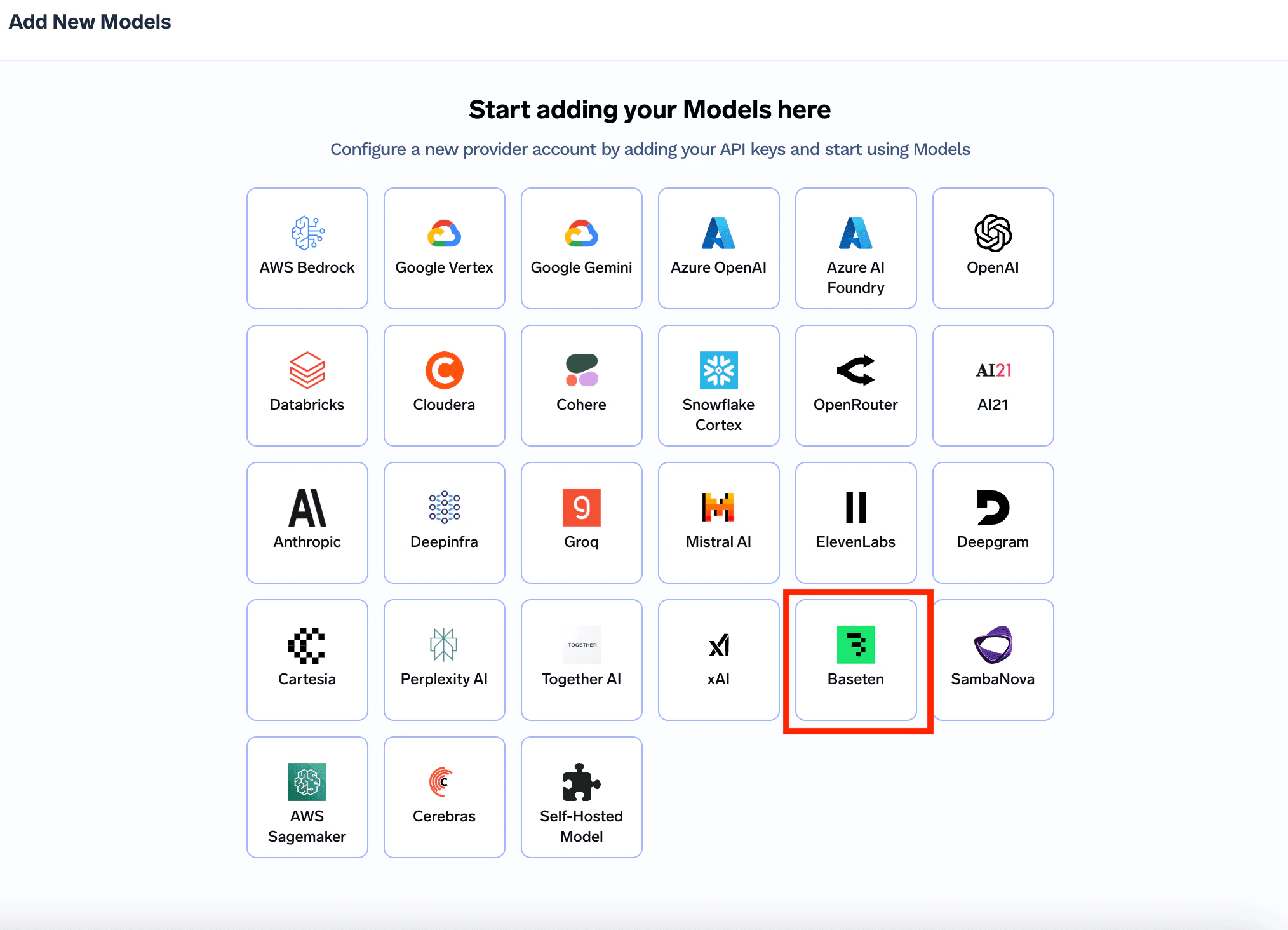

- Baseten is now available as a first-class inference provider, supporting chat completions and embeddings.

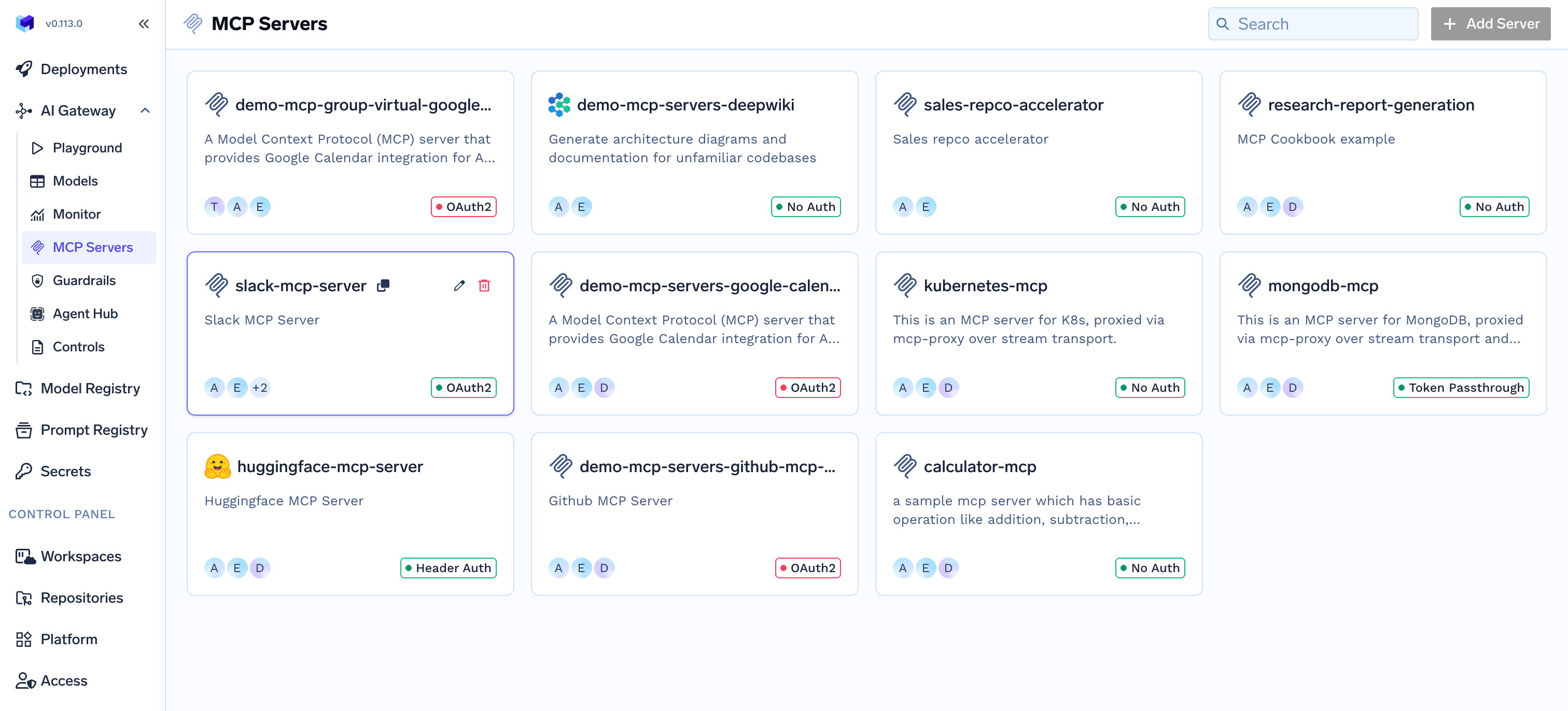

- MCP servers now support per-user header-based auth overrides, allowing individual users to supply their own API tokens for MCP servers that require per-user credentials. MCP auth overrides are enabled by default.

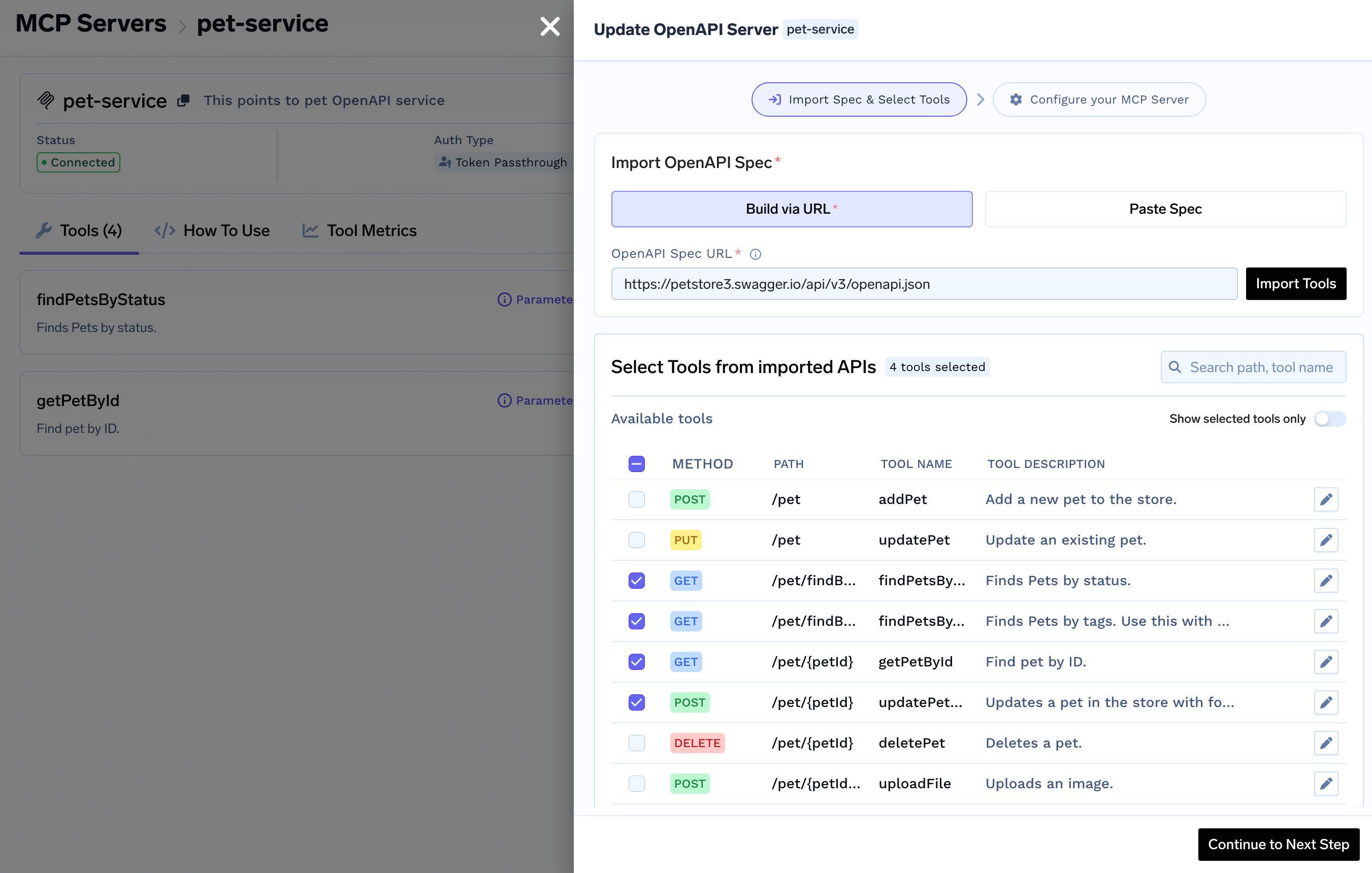

- OpenAPI-backed MCP servers are now processed through the full gateway hook pipeline, ensuring guardrails, auth, and tool settings apply consistently with remote MCP servers.

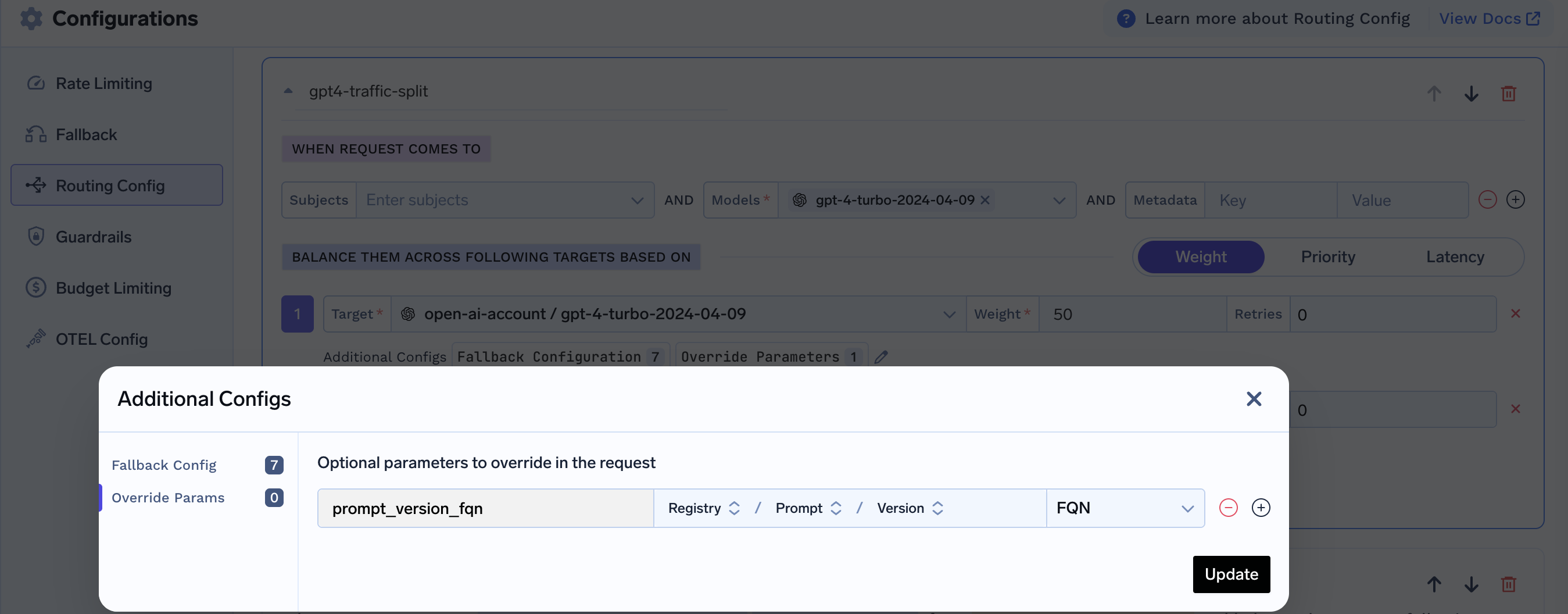

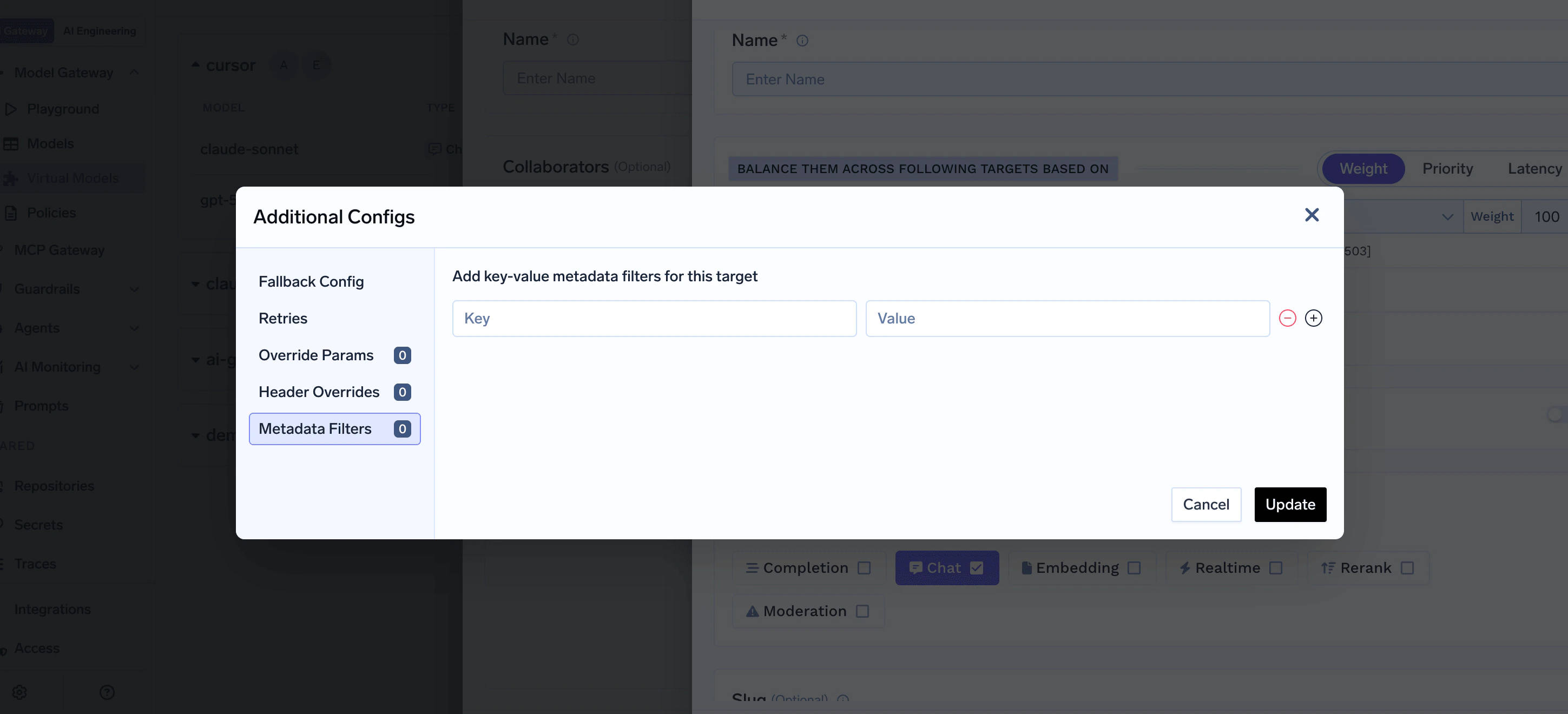

- Virtual model routing targets can now include `metadata_match` conditions, so only targets whose key/value constraints match the incoming request metadata headers are eligible for routing.

- TLS and proxy support is now available for remote MCP server connections, configurable via manifest

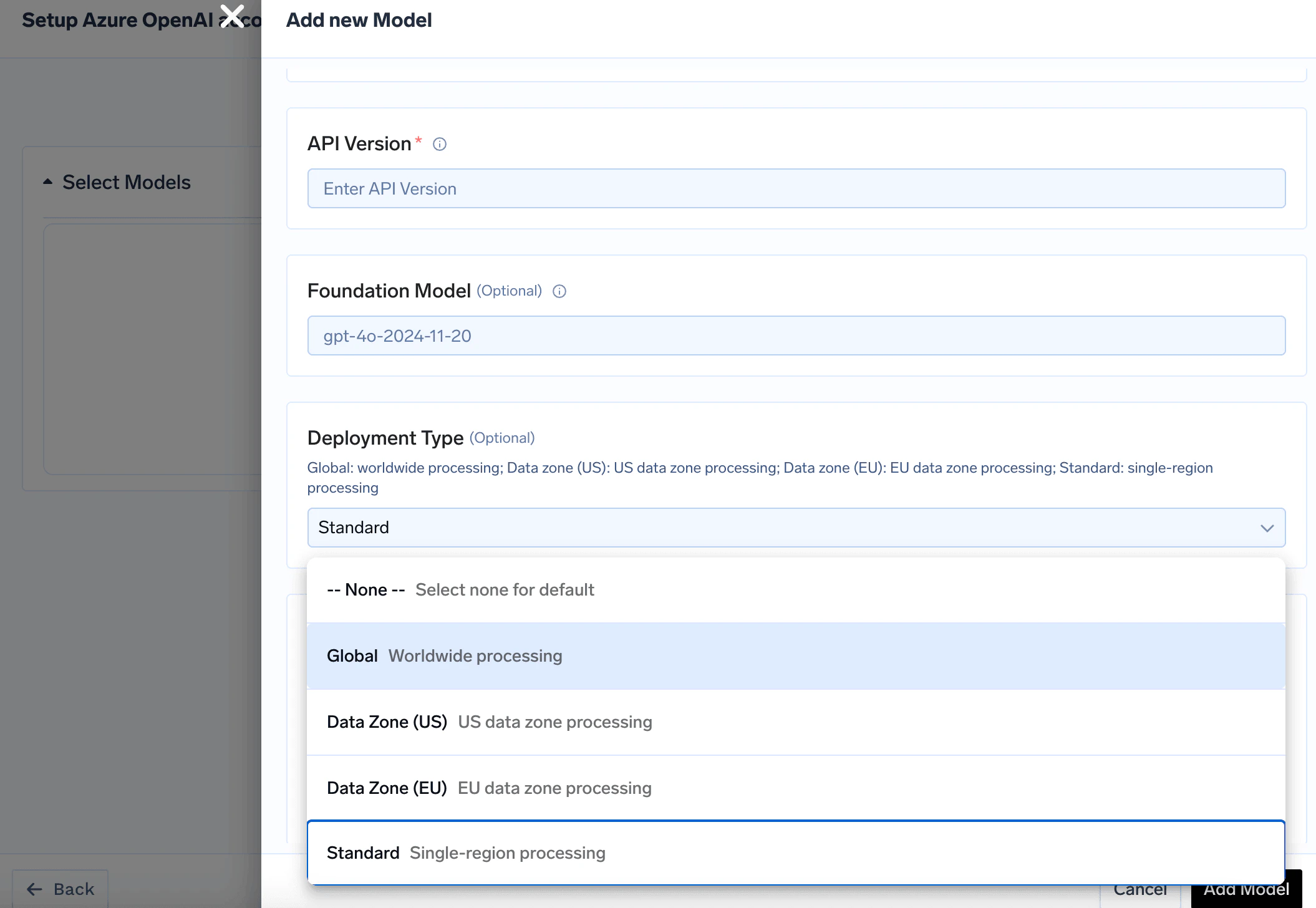

tls_settings. - Azure OpenAI deployment configuration has been reworked — the previous region and data-zone fields are replaced by a

deployment_typeselector (global,datazone_us,datazone_eu,standard), and pricing lookup is updated accordingly.

- The `x-tfy-routing-config` request header is no longer accepted for routing overrides. Routing configuration is now resolved exclusively from virtual model config, prompt config, or tenant-level load-balance settings. Clients that relied on this header must migrate to one of those mechanisms.
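
To make the `metadata_match` behaviour on virtual model routing targets concrete, here is a minimal sketch of the filtering idea: a target stays eligible only if every key/value constraint equals the corresponding request metadata value. The target dictionaries, model names, and the rule that targets without constraints are always eligible are assumptions made for illustration; they do not reproduce the gateway's actual schema or implementation.

```python
def eligible_targets(targets, request_metadata):
    """Keep targets whose metadata_match constraints all equal the request metadata."""
    eligible = []
    for target in targets:
        # Assumption: a target without metadata_match has no constraints.
        constraints = target.get("metadata_match") or {}
        if all(request_metadata.get(key) == value for key, value in constraints.items()):
            eligible.append(target)
    return eligible


# Hypothetical routing targets and request metadata.
targets = [
    {"model": "provider-a/model-x", "metadata_match": {"tier": "premium"}},
    {"model": "provider-b/model-y", "metadata_match": {"tier": "free"}},
    {"model": "provider-c/model-z"},
]
request_metadata = {"tier": "premium", "team": "search"}

selected = [t["model"] for t in eligible_targets(targets, request_metadata)]
```

Extra metadata keys on the request (here `team`) do not disqualify a target in this sketch; only the keys a target names are compared.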

AI Engineering

- GCR (`gcr.io`) container registries are now supported alongside Artifact Registry for GCP Docker registry integrations.

- The OpenTelemetry collector now accepts traces and metrics over gRPC (port 4317) in addition to HTTP.

- Platform policies can now be applied to `volume` manifests, extending enforcement and mutation support to volume deployments.

Release instructions

- Update the `truefoundry` Helm chart to version `0.133.3`.

- Update the `tfy-llm-gateway` chart to version `0.133.0`.

Updates

AI Gateway

- Google Model Armor guardrails now support a `mutate` operation mode that can redact or transform request and response content via sensitive data protection, in addition to the existing `validate` mode.

- MCP tool-call guardrails are now enforced on SSE streaming responses in addition to JSON responses, with consistent blocking and error handling across both transports.

- The PromptFoo guardrail integration has been removed from both the gateway and the control plane.

- Speech-to-text proxy support has been added for Vertex, with a corresponding code snippet available in the playground.

- Added support for sticky routing for virtual models.

- Guardrail requests to Palo Alto can now include custom metadata fields (e.g. AI model name, app user, app name) via a configurable `metadata_key_mapping`.

- Fixed structured output (`response_format: json_schema`) handling for Anthropic models on Vertex.

- Added support for disabling individual tools on an MCP Server.

- New code snippet integrations have been added for the playground, including Pydantic, Agno, CrewAI, Instructor, Langroid, OpenAI Agents, OpenAI Swarm, Strands Agents, and Codex.

- New format for MCP Gateway endpoint.

AI Engineering

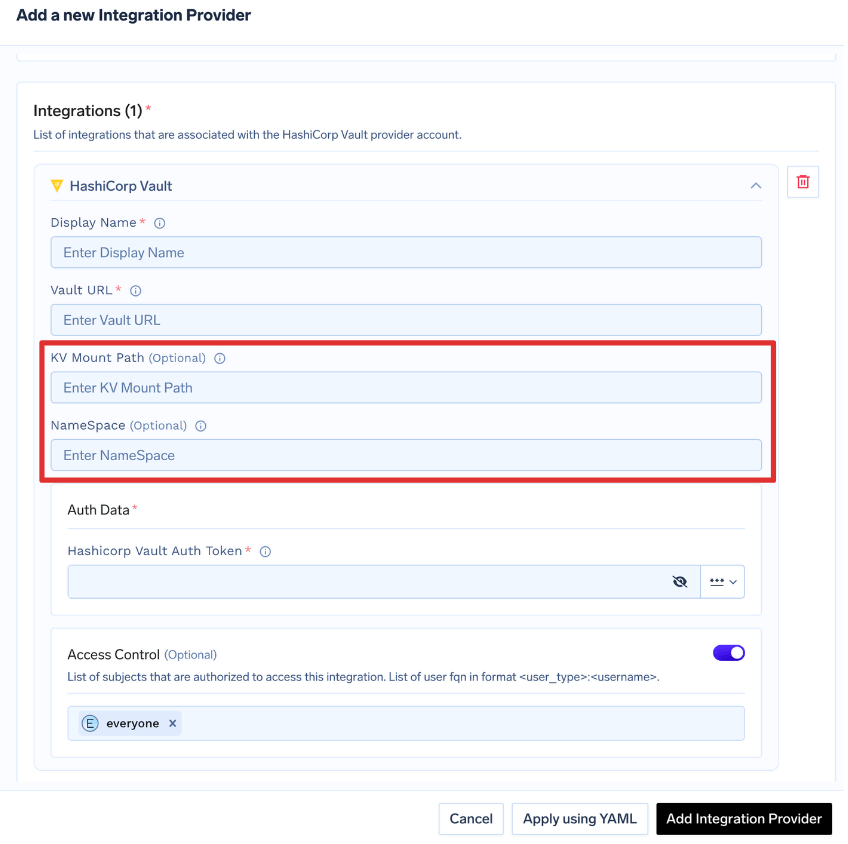

- HashiCorp Vault integrations now require `kv_mount_path` and support an optional `root_path`.

Breaking Change: HashiCorp Integration

- `kv_mount_path` is a required parameter.

- The key path used in a Secret FQN should be updated to the following format: `<root_path>/<secretPath>/<secretKeyInJSON>`.

| | Existing | New |
|---|---|---|
| Model Integration Auth Data | `tenant1:hashicorp:secret-store:vault::kv1/data/my-root-path/my-secret-path/my-secret-key` | `tenant1:hashicorp:secret-store:vault::my-root-path/my-secret-path/my-secret-key/value` |
| Virtual Account Sync Secret | `kv1/data/my-root-path/my-secret-path/my-secret-key` | `my-root-path/my-secret-path/my-secret-key/value` |
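
The two rows above suggest a mechanical rewrite: drop the leading `<kv_mount_path>/data/` prefix and append the JSON key of the secret. The sketch below encodes that reading. It is inferred only from these two examples; the helper names are hypothetical, and the `value` JSON key is an assumption taken from the table, so verify the result against your own secrets before migrating.

```python
def migrate_key_path(old_path: str, kv_mount_path: str, json_key: str = "value") -> str:
    """Rewrite '<mount>/data/<path>' to '<path>/<json_key>' (inferred from the table)."""
    prefix = f"{kv_mount_path}/data/"
    if not old_path.startswith(prefix):
        raise ValueError(f"path does not start with {prefix!r}: {old_path!r}")
    return f"{old_path[len(prefix):]}/{json_key}"


def migrate_secret_fqn(old_fqn: str, kv_mount_path: str, json_key: str = "value") -> str:
    """Apply the same rewrite to the key path that follows '::' in a Secret FQN."""
    head, separator, key_path = old_fqn.rpartition("::")
    if not separator:
        raise ValueError(f"no '::' separator found in {old_fqn!r}")
    return f"{head}::{migrate_key_path(key_path, kv_mount_path, json_key)}"


# Values copied from the table; "kv1" is the KV mount path in those examples.
new_path = migrate_key_path("kv1/data/my-root-path/my-secret-path/my-secret-key", "kv1")
new_fqn = migrate_secret_fqn(
    "tenant1:hashicorp:secret-store:vault::kv1/data/my-root-path/my-secret-path/my-secret-key",
    "kv1",
)
```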

- SCIM provisioning is now supported for SAML-based external OAuth identity providers.

- Additional AWS instance families are now available for EKS cluster provisioning, including Graviton-based (T4g, M6g/M7g, C6g/C7g, R6g/R7g), ML accelerator (Trn1, Inf2), and newer GPU families (P5e, P6-B200, G6e, G7e).

- A generic custom secret store integration is now available as an alternative to the built-in TrueFoundry DB or HashiCorp Vault secret backends.

- Added support for a Databricks Job Task in Flyte workflows, allowing you to trigger Databricks jobs from a workflow.

- The YAML spec/apply workflow has been removed from platform settings configuration drawers; settings are now edited exclusively through the form UI.

- Simplified GitOps CI/CD: `tfy apply` now supports directory-level `--diffs-only` with automatic dependency resolution, replacing complex per-file CI/CD scripts with a single command. Dry runs now succeed even when a PR adds interdependent resources. Read more

Release instructions

- Update the `truefoundry` Helm chart to version `0.132.5`.

- Update the `tfy-llm-gateway` chart to version `0.132.2`.

Updates

- Added support for time-to-first-token (TTFT) based timeout using the header `x-tfy-ttft-timeout-ms`. Read more

- Added support for finetuned model integration in Google Vertex AI.

- Added support to configure custom KMS Key ARN in AWS Secret Manager/AWS Parameter Store for Secret Store integration.
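
As a rough sketch of how a client might set the `x-tfy-ttft-timeout-ms` header from the list above, the snippet below builds (but does not send) a request with Python's standard library. The gateway URL, token, model name, and the OpenAI-style request body are placeholders assumed for illustration; only the header name comes from these notes.

```python
import json
import urllib.request

# Hypothetical values: replace with your own gateway URL, token, and model.
GATEWAY_URL = "https://gateway.example.com/chat/completions"
API_TOKEN = "your-token"


def build_request(ttft_timeout_ms: int) -> urllib.request.Request:
    """Build a streaming chat request that carries a TTFT timeout in milliseconds."""
    body = json.dumps(
        {
            "model": "my-provider/my-model",  # placeholder model name
            "messages": [{"role": "user", "content": "Hello"}],
            "stream": True,
        }
    ).encode("utf-8")
    return urllib.request.Request(
        GATEWAY_URL,
        data=body,
        method="POST",
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {API_TOKEN}",
            "x-tfy-ttft-timeout-ms": str(ttft_timeout_ms),
        },
    )


request = build_request(2000)  # ask for a first token within 2 seconds
```

Passing the request to `urllib.request.urlopen` would send it; that step is left out because the endpoint here is a placeholder.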

Release instructions

- Update `truefoundry` helm chart version to `0.127.3`.

- Update `tfy-llm-gateway` helm chart version to `0.127.0`.

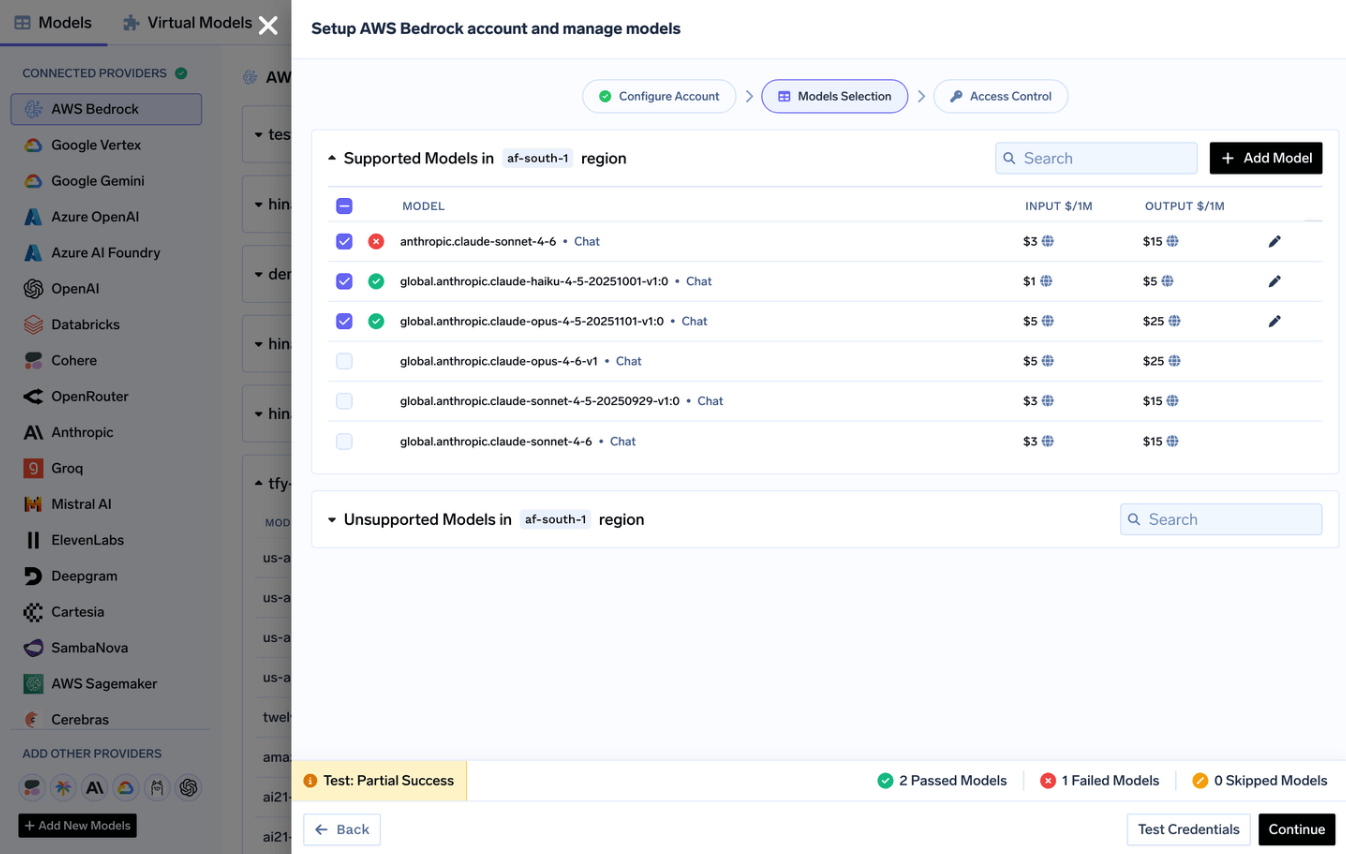

New: Enhanced Model integration flow to verify models before enabling them

You can now easily verify that models are working before adding them to your Integration. This helps resolve any authentication or infrastructure issues before the models are shown to your end users.

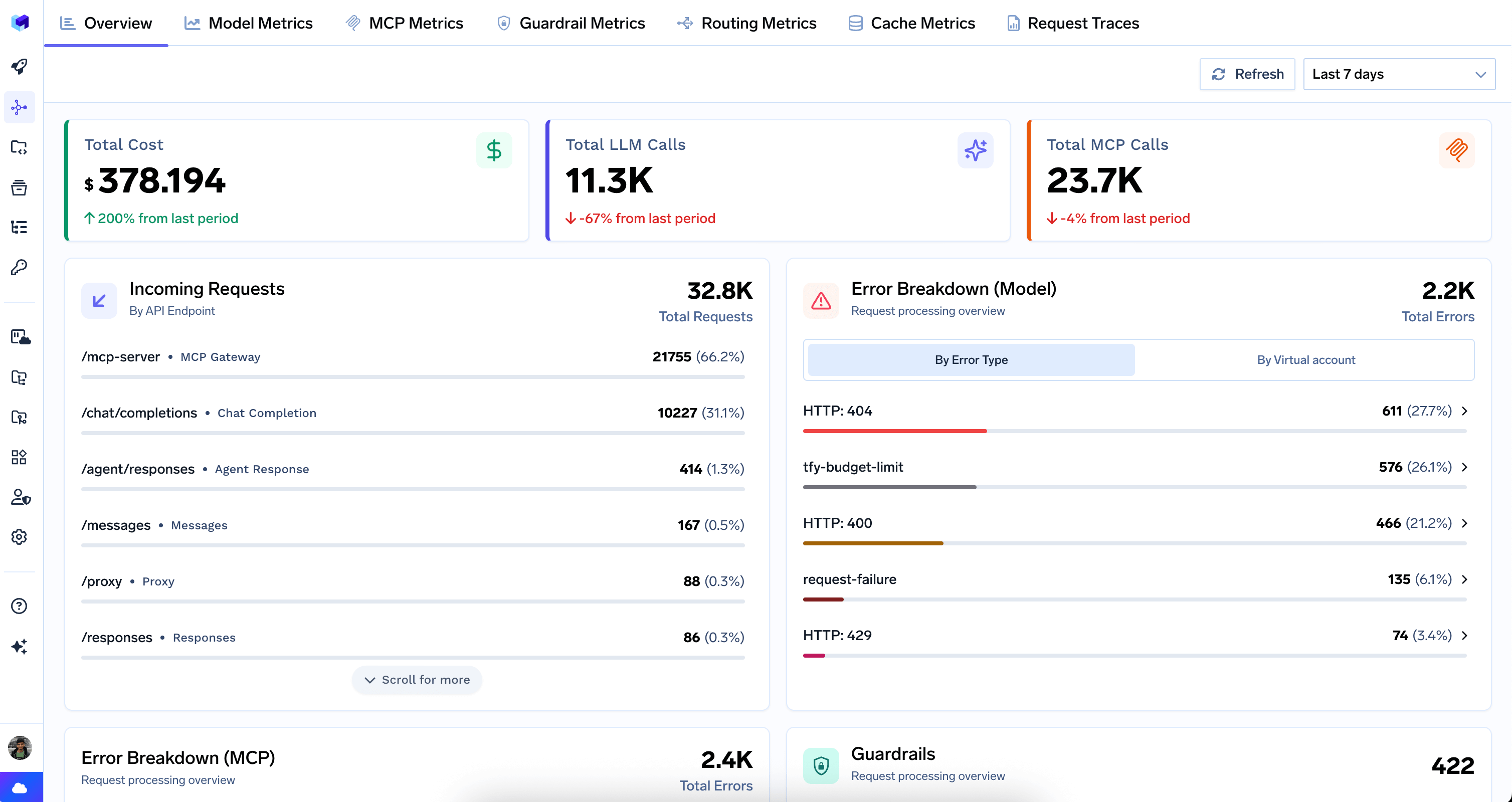

Overview dashboard for AI Gateway

Get a high-level summary of your AI Gateway’s health, cost, errors, and top usage patterns. Read more

Updates

- Separated the configs for Google Gemini and Vertex in Gemini CLI (https://www.truefoundry.com/docs/ai-gateway/gemini-cli#migrating-from-gemini-config-to-vertex-ai-config)

- Added support for Google Model Armor Guardrails (https://www.truefoundry.com/docs/ai-gateway/google-model-armor#google-model-armor-guardrail-integration)

- Added Rate limit, Budget limit support for Gemini CLI

- Added support for live/realtime API in Gemini, OpenAI, Azure. Read more

- Bugfix: Gemini thinking tokens now flow through in the response.

- Added support for custom `base_url` in GraySwan Cygnal Guardrail.

- Improved performance of AI Gateway Tracing to show live logs without delay.

Release instructions

- Update `truefoundry` helm chart version to `0.126.2`.

- Update `tfy-llm-gateway` helm chart version to `0.126.2`.

Updates

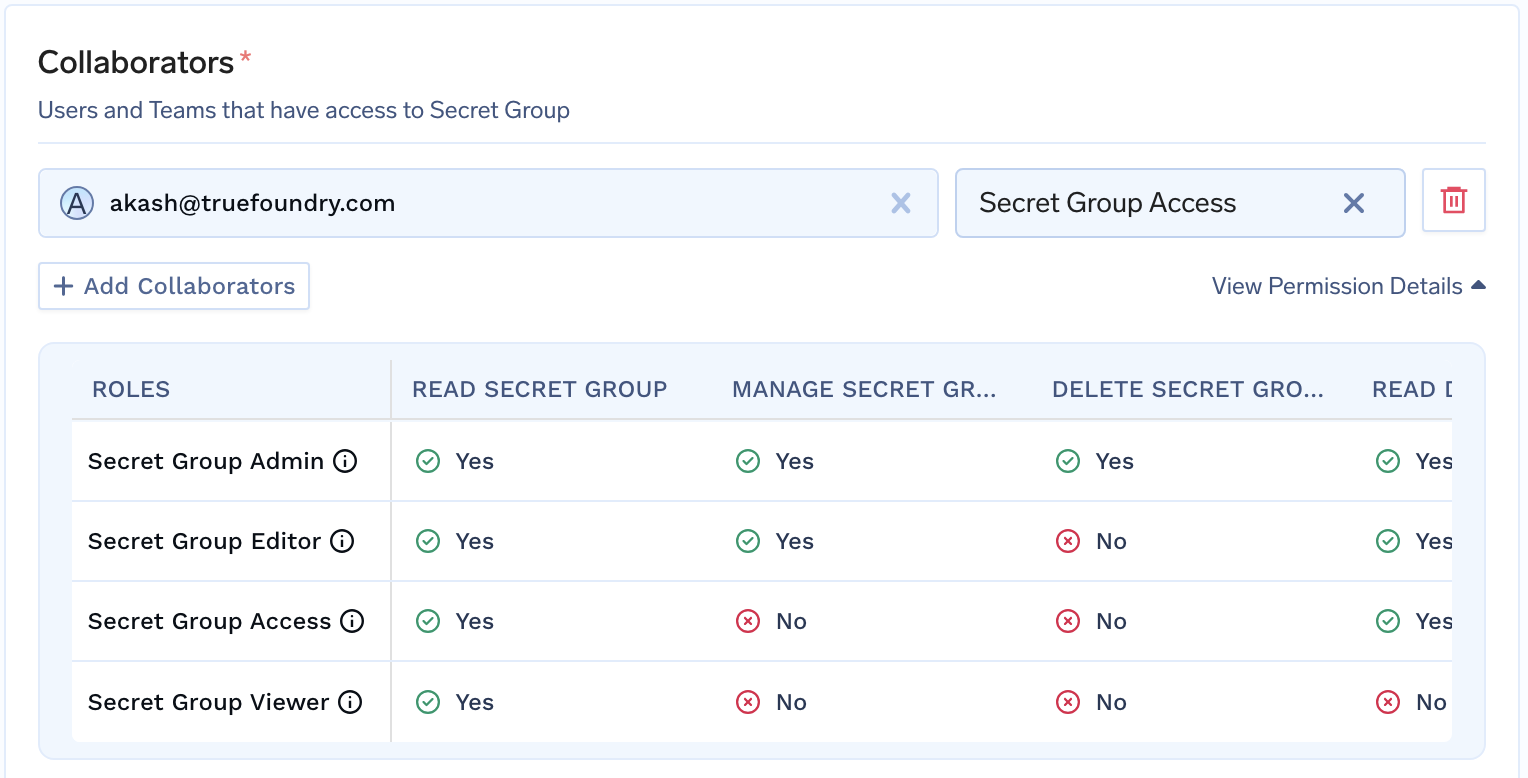

- Added new role `Secret Group Access` for Secret Group, allowing users to read secret values but not update them.

- Added support for Validation in PII Guardrails.

- Added support for TTS (Text-to-Speech) & STT (Speech-to-Text) Models for Groq. TTS STT

- Added support for Realtime models in Google Gemini model. Read more

- Added support for the `compaction` API for OpenAI. Read more

Release instructions

- Update `truefoundry` helm chart version to `0.125.10`.

- Update `tfy-llm-gateway` helm chart version to `0.125.2`. This requires the following updates in the values file if it is installed as a standalone application:
  - Set `global.tenantName` to the name of the tenant.
  - Set `global.controlPlaneURL` to the Control Plane URL.
  - Remove any URL set under `env`.

Updates

- Added support for TTS (Text-to-Speech) & STT (Speech-to-Text) Models for ElevenLabs, Cartesia, Deepgram, Vertex AI, Gemini. TTS STT

- Added support for Deepgram Model Integration. Read more

- Added support for Cartesia Model Integration. Read more

- Added support for ElevenLabs Model Integration. Read more

- Enable `tfy apply` for MCP Server integrations.

Release instructions

- Update `truefoundry` helm chart version to `0.122.3`.

- Update `tfy-llm-gateway` helm chart version to `0.122.0`.

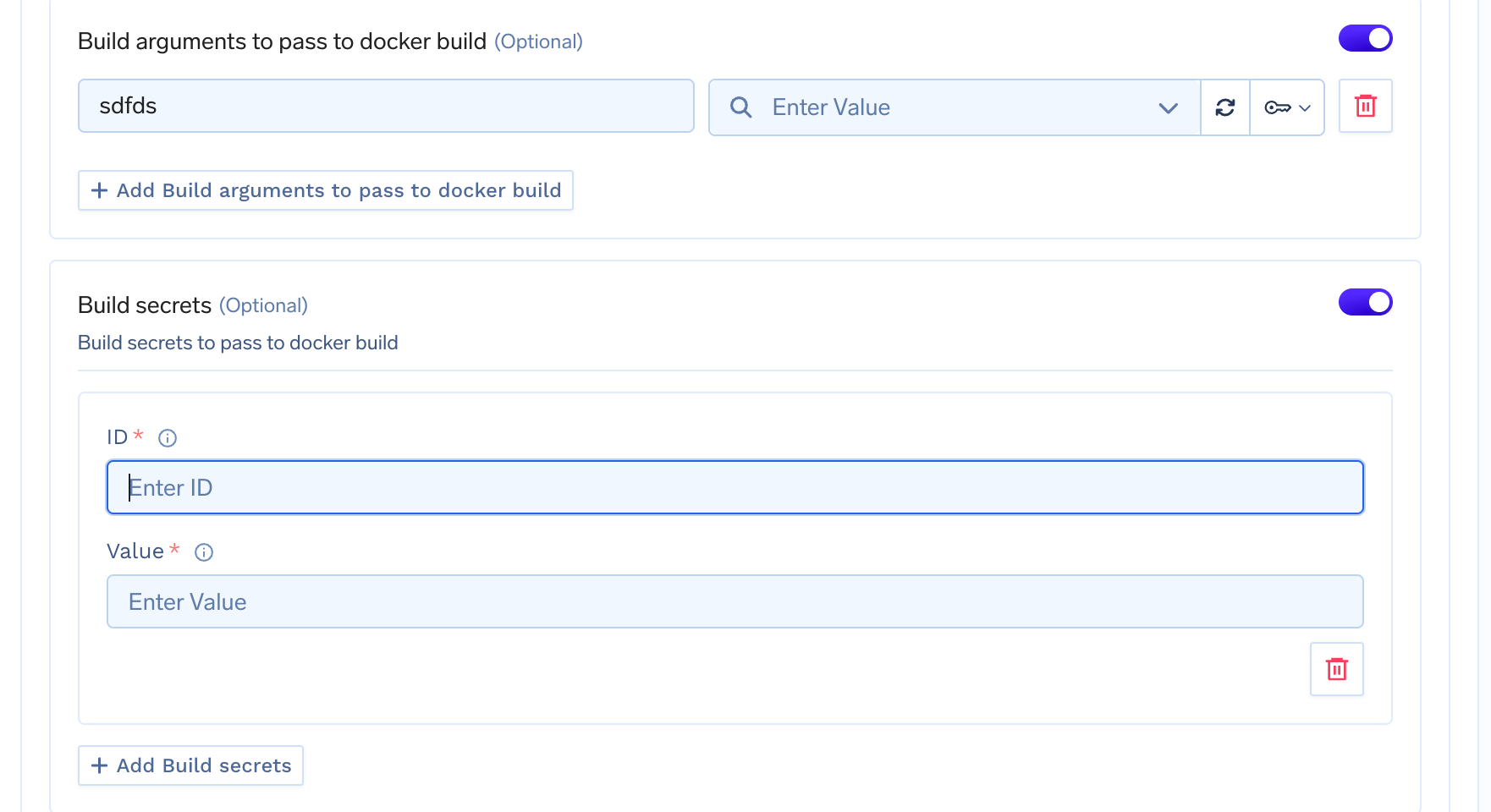

New: Added support for Build Secret in Dockerfile Deployment

Docker build secrets allow you to securely pass sensitive information like private repository credentials, API keys, or authentication tokens during the Docker image build process. Read more

More Updates

- Improvements in HashiCorp Vault integration:
  - Added support for custom KV mount path
  - Added support for custom Namespace

Release instructions

- Update `truefoundry` helm chart version to `0.121.0`.

- Update `tfy-llm-gateway` helm chart version to `0.121.0`.

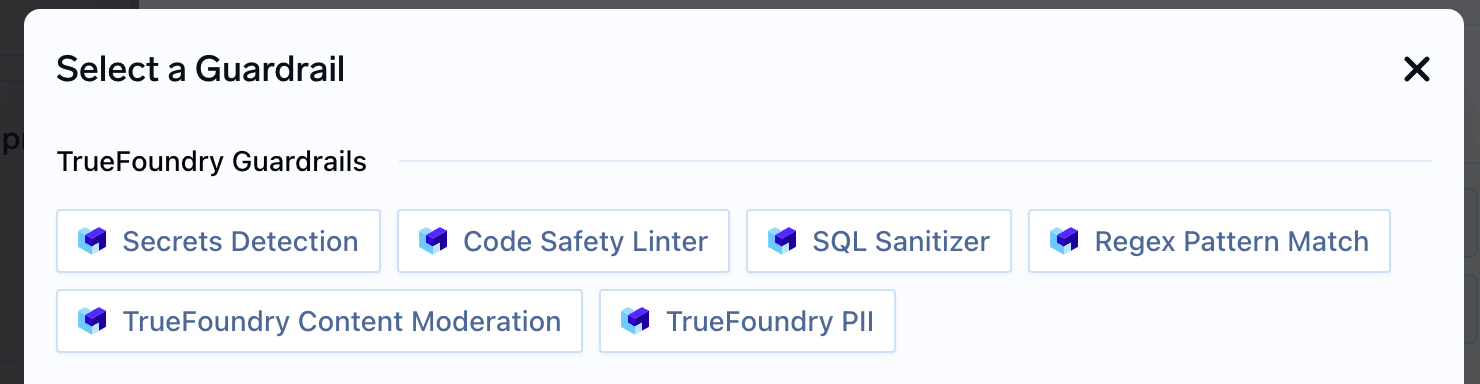

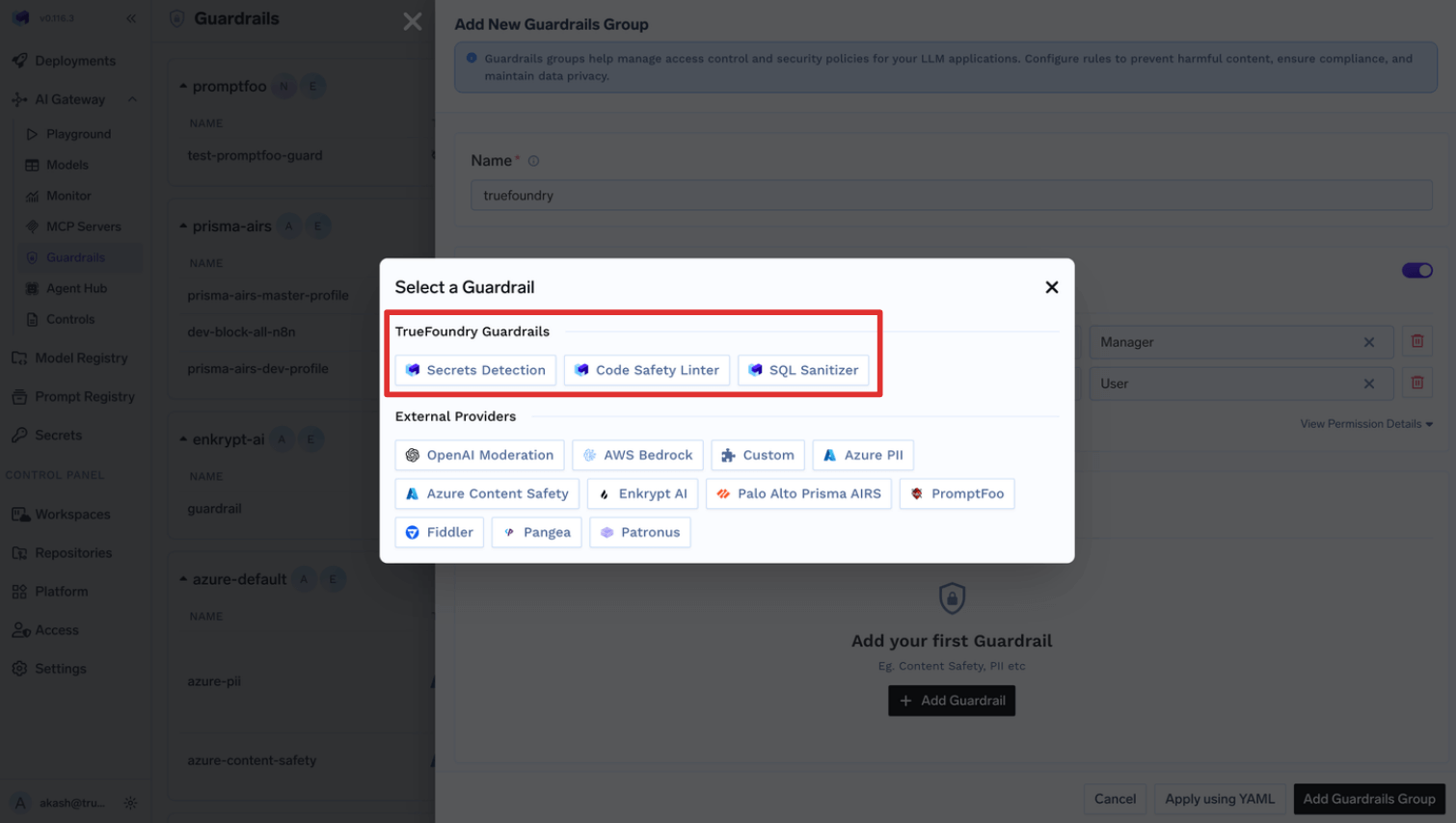

New: More TrueFoundry managed Guardrails

We are expanding our TrueFoundry managed Guardrails with the addition of Regex Pattern Match, TrueFoundry PII, and Content Moderation Guardrails. (Only available in SaaS AI Gateway)

More Updates

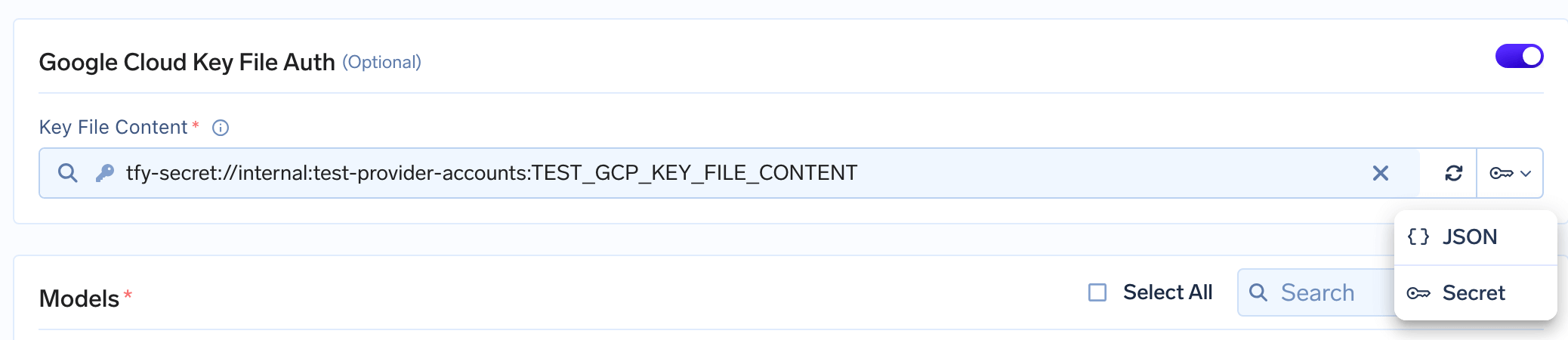

- Improved: Cost Attribution for Gemini CLI

- Improved: Google GCP Integrations now support giving Key File Content as TrueFoundry Secret FQN.

Release instructions

- Update `truefoundry` helm chart version to `0.118.2`.

New: TrueFoundry managed Guardrails

We are releasing TrueFoundry managed Guardrails such as Secret Detection, Code Safety Linter & SQL Sanitizer and will be adding more soon.

More Updates

- New: Added support for configuring Embedding Model for Semantic Caching in AI Gateway Requests. Read more

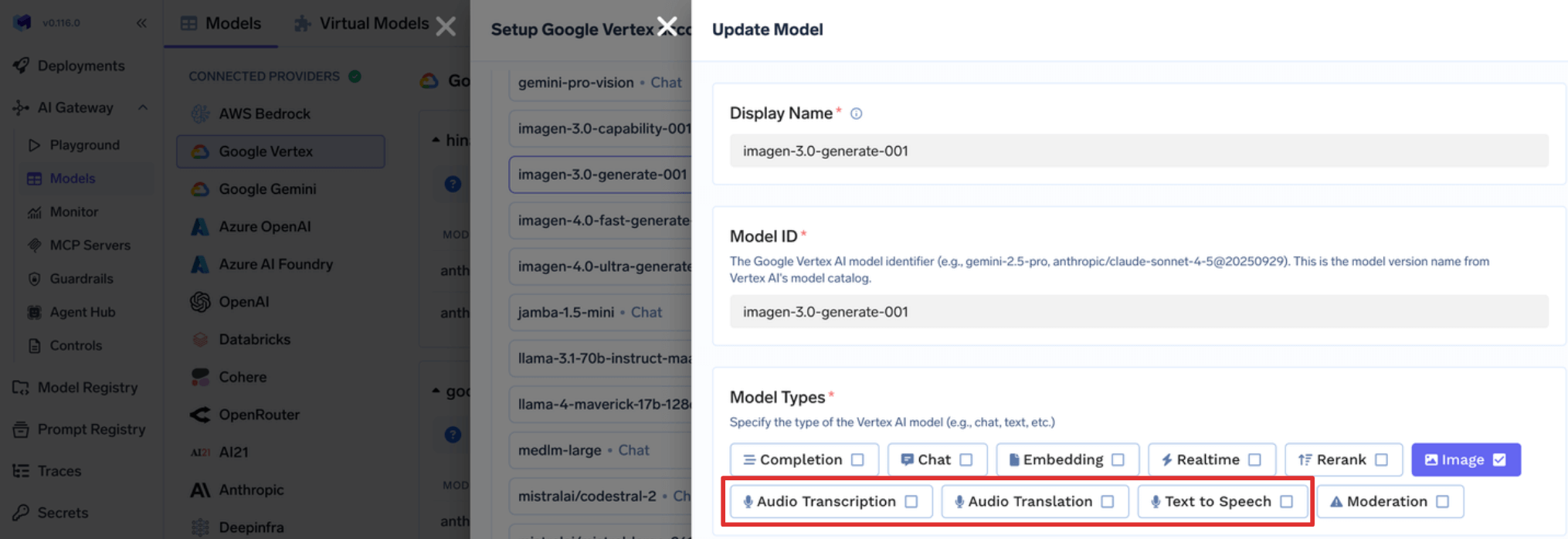

- New: Added support for Audio Transcript, Audio Translation & Text to Speech HTTP APIs in Google Vertex models.

Release instructions

- Update `truefoundry` helm chart version to `0.117.2`.

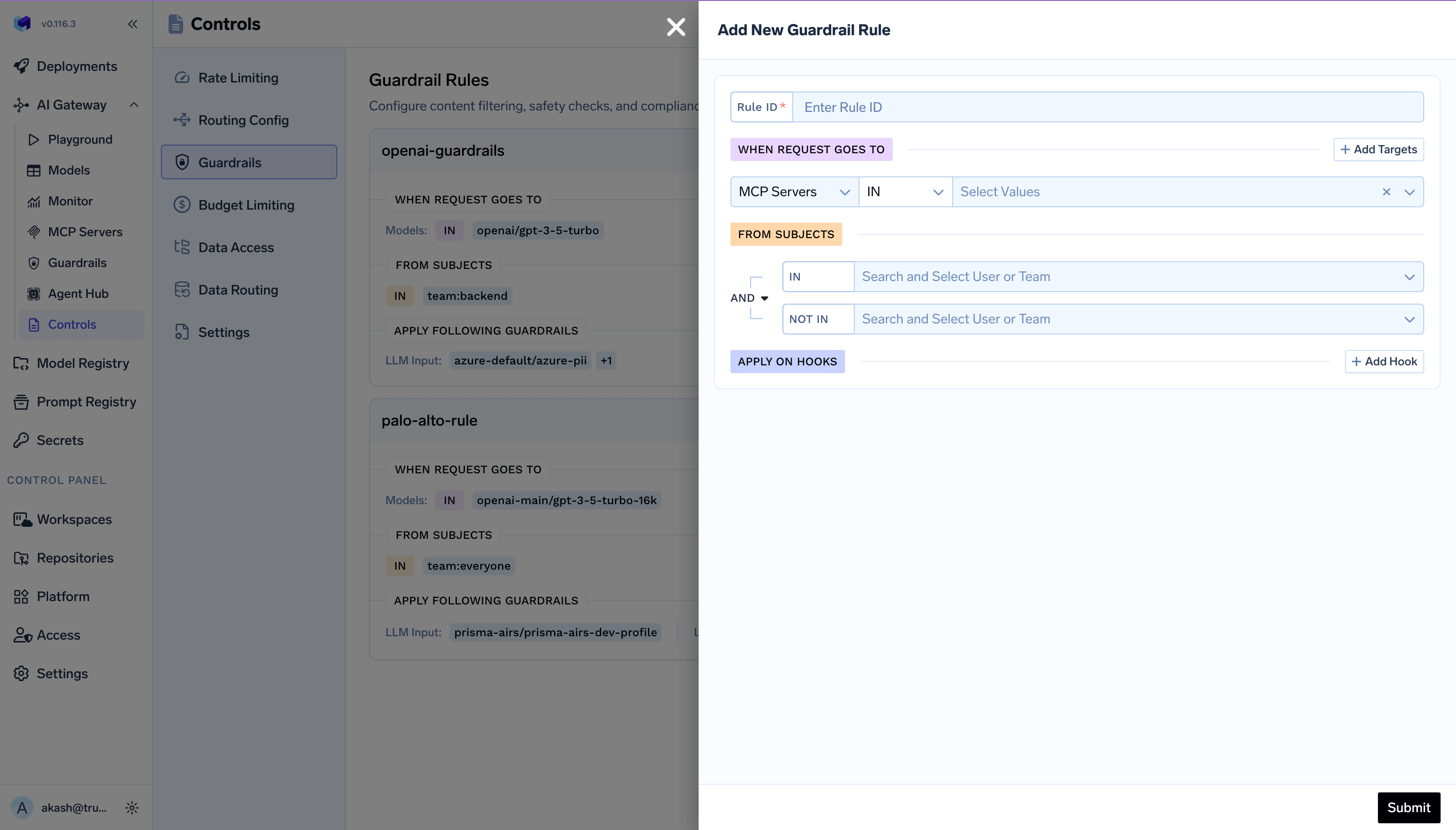

Improvement: MCP Guardrails and Guardrail Config Schema Change

We have launched a feature to enable MCP Guardrails. This allows us to configure policies at the Gateway layer to apply certain guardrails to specific MCP servers and models.

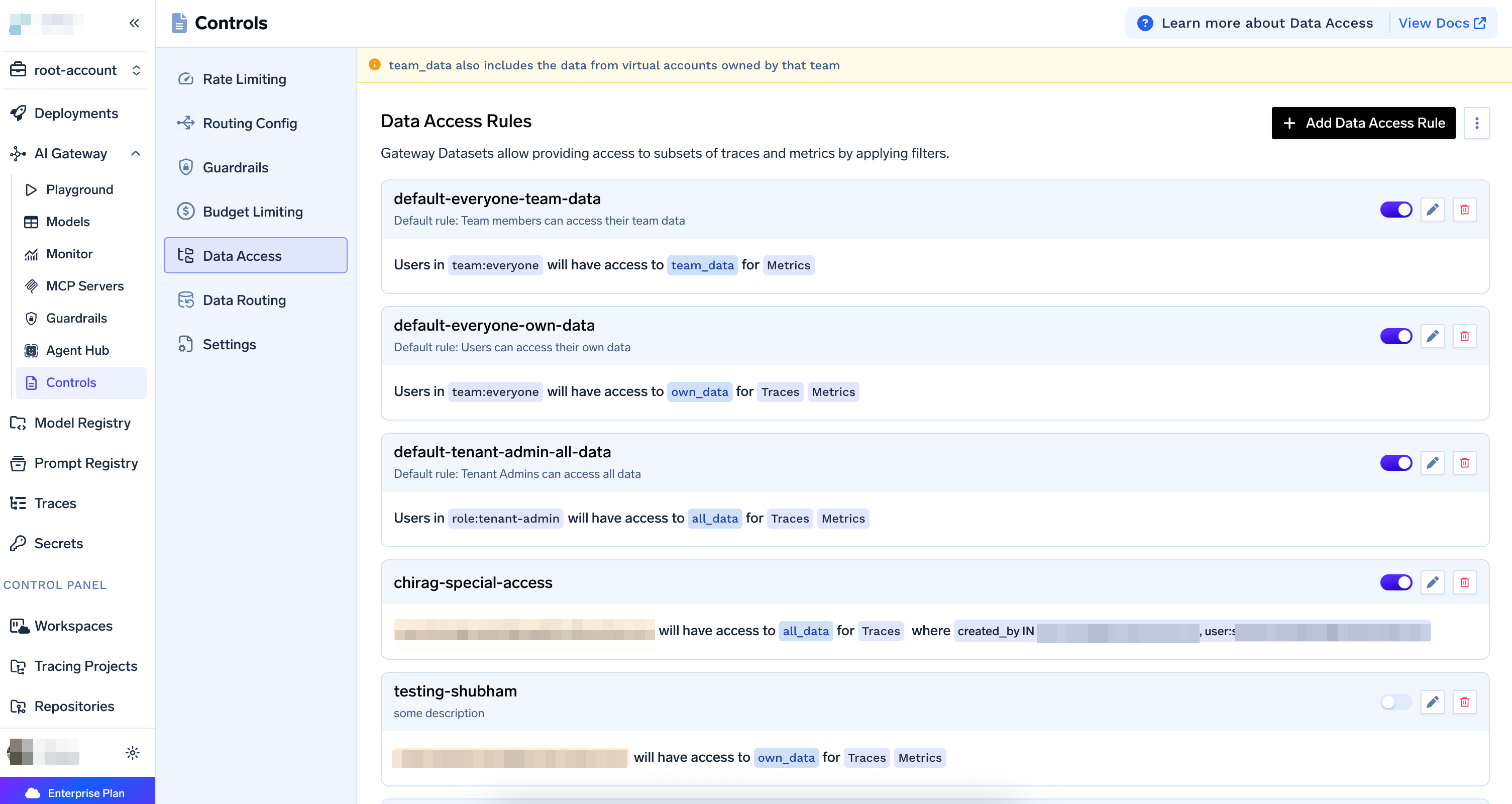

New: Data Access Rules for AI Gateway Request Logs and Metrics

Data Access Rules allow you to control who can access which request logs and metrics in the AI Gateway. Gateway Datasets provide access to subsets of traces and metrics by applying filters, enabling fine-grained access control based on users, teams, roles, and data scopes.

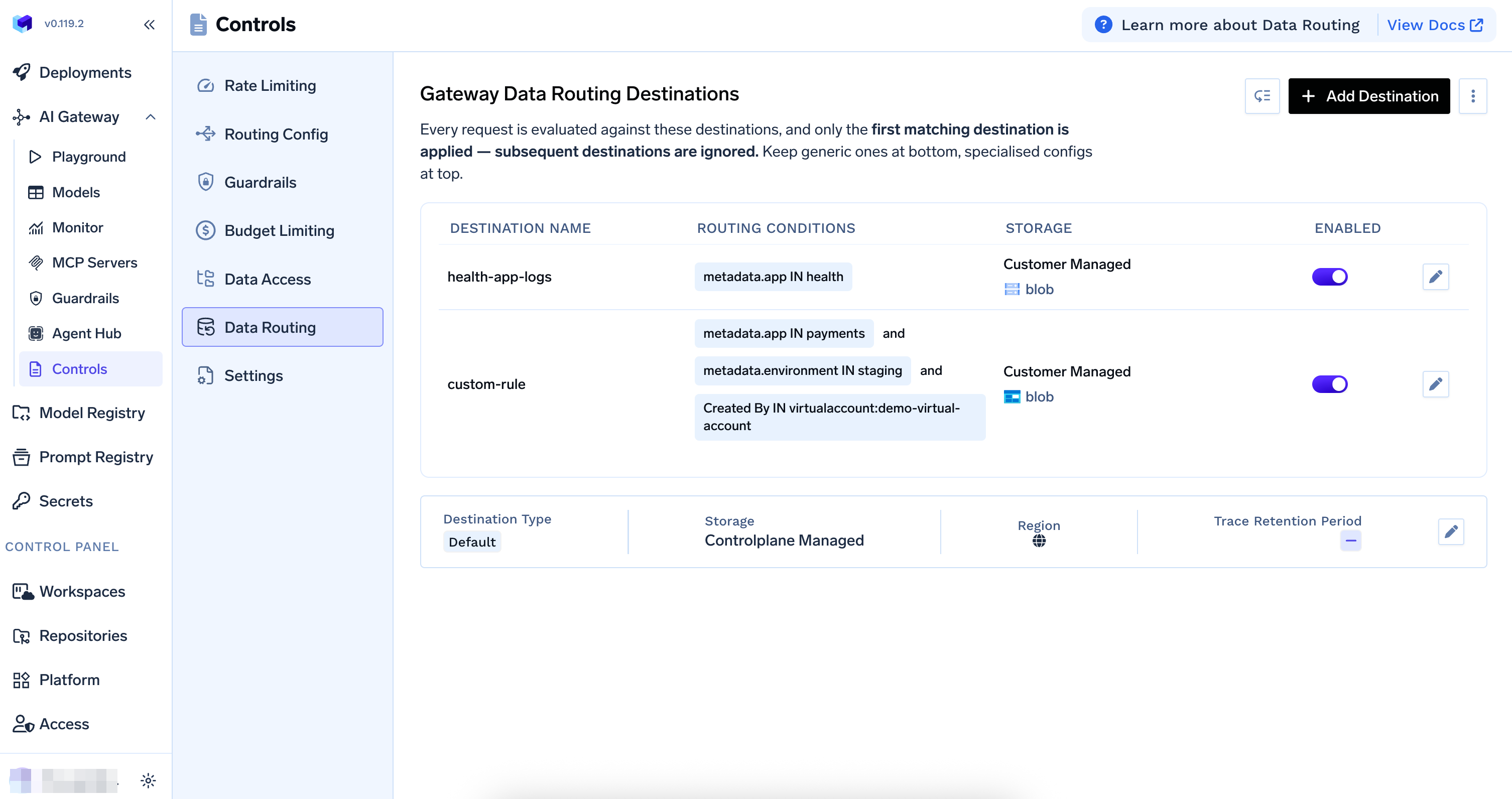

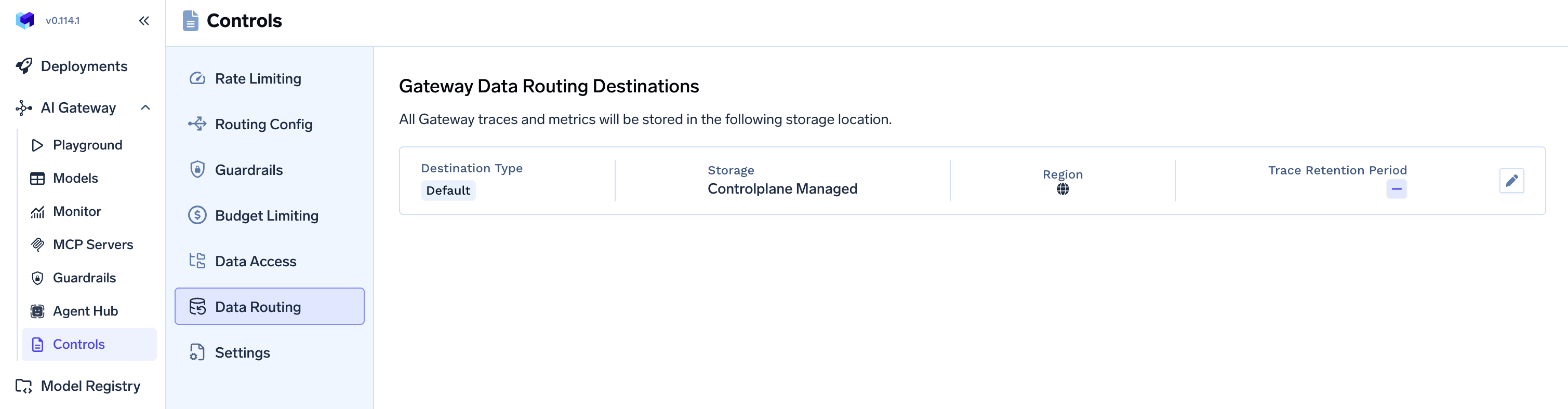

Improvement: Data Routing Rules to Configure Storage For AI Gateway Request Logs and Metrics

Data Routing Rules allow you to configure where your request logs (traces) and metrics are stored. You can choose between control plane managed storage or bring your own customer-managed storage. Read more

More Updates

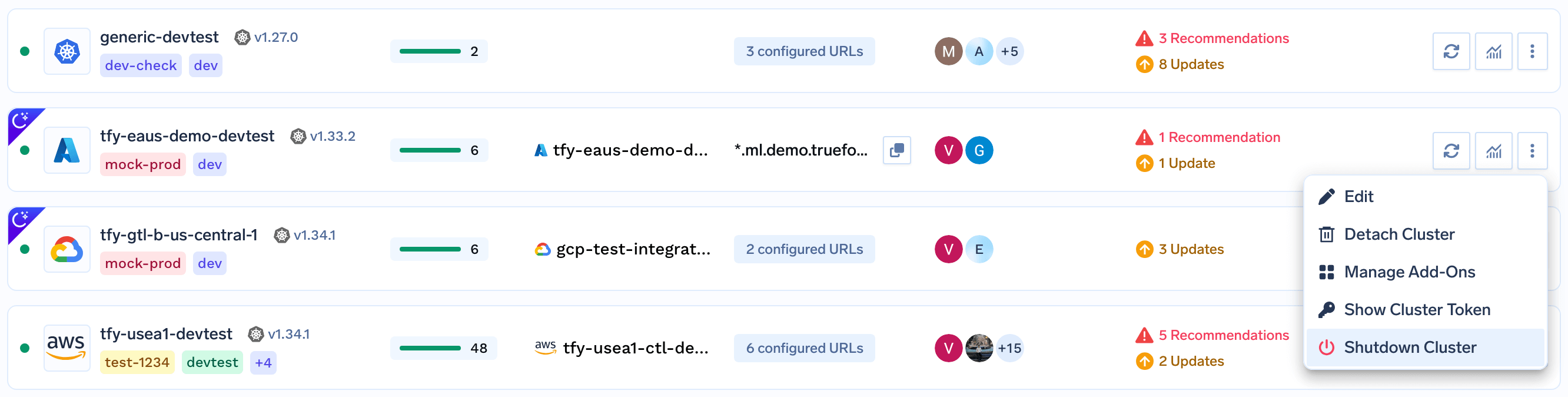

- Change: Revamp of `External Identity` by introducing `Identity Provider`. Read more

- New: Added support to `Shutdown` a cluster connected to TrueFoundry.

- Behavior Change: Team Managers can now view all the `Virtual Accounts` owned by their Team.

- Allow assigning `Custom Roles` to `Virtual Account`.

- Added support for image model in Google Gemini.

- Default roles can now be updated so that Team Managers can manage their Virtual Accounts without being made Admin. Read more

- New: Create MCP Servers using OpenAPI spec. Read more

Release instructions

- Update `truefoundry` helm chart version to `0.116.3`.

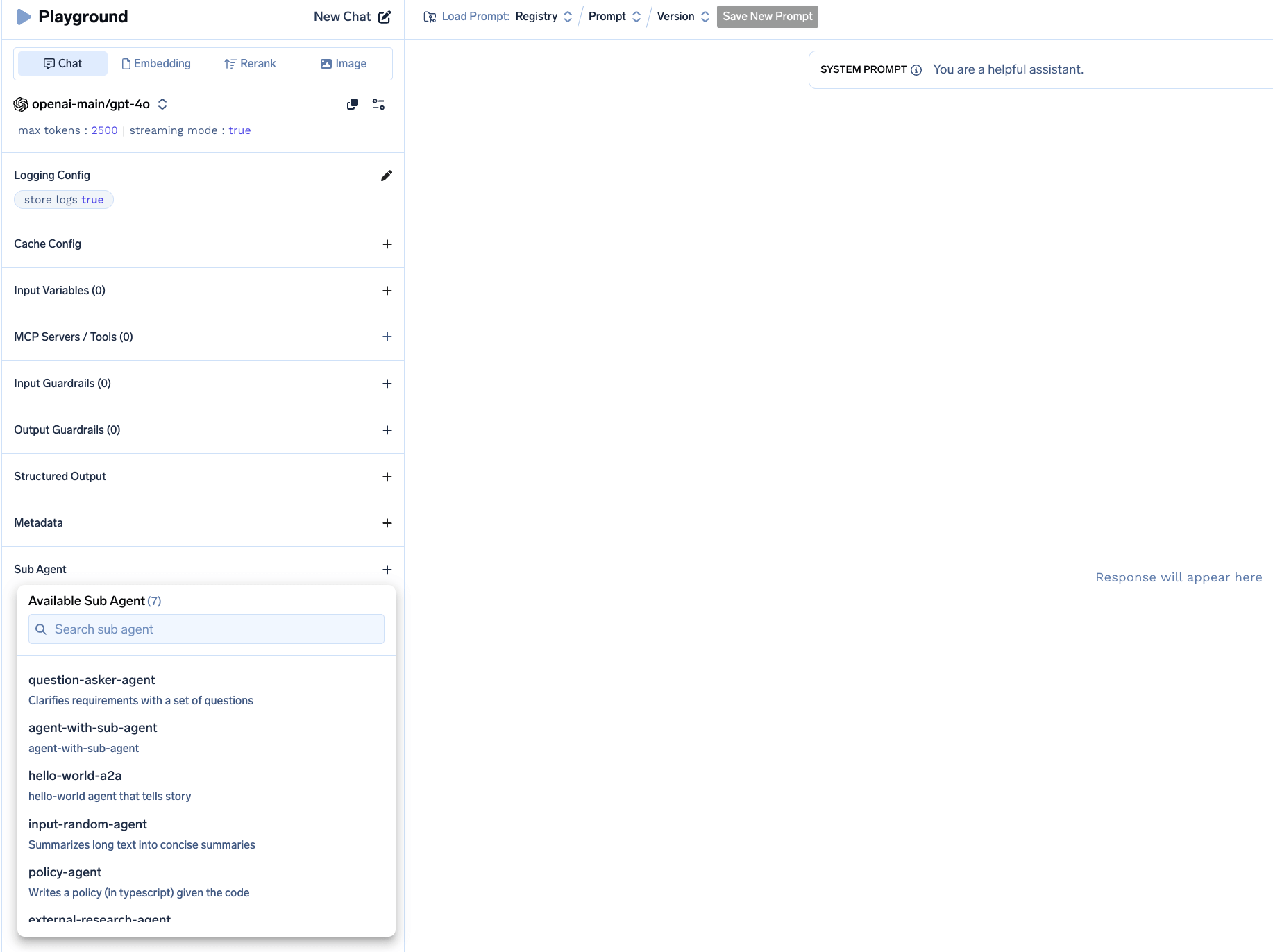

New: Allow query with multiple sub-agents

You can now use multiple sub-agents along with mcp-servers in AI Gateway and create useful super-agents. Read more

More Updates

- Added support for using MCP Server

namein MCP Gateway URL. - Added support for

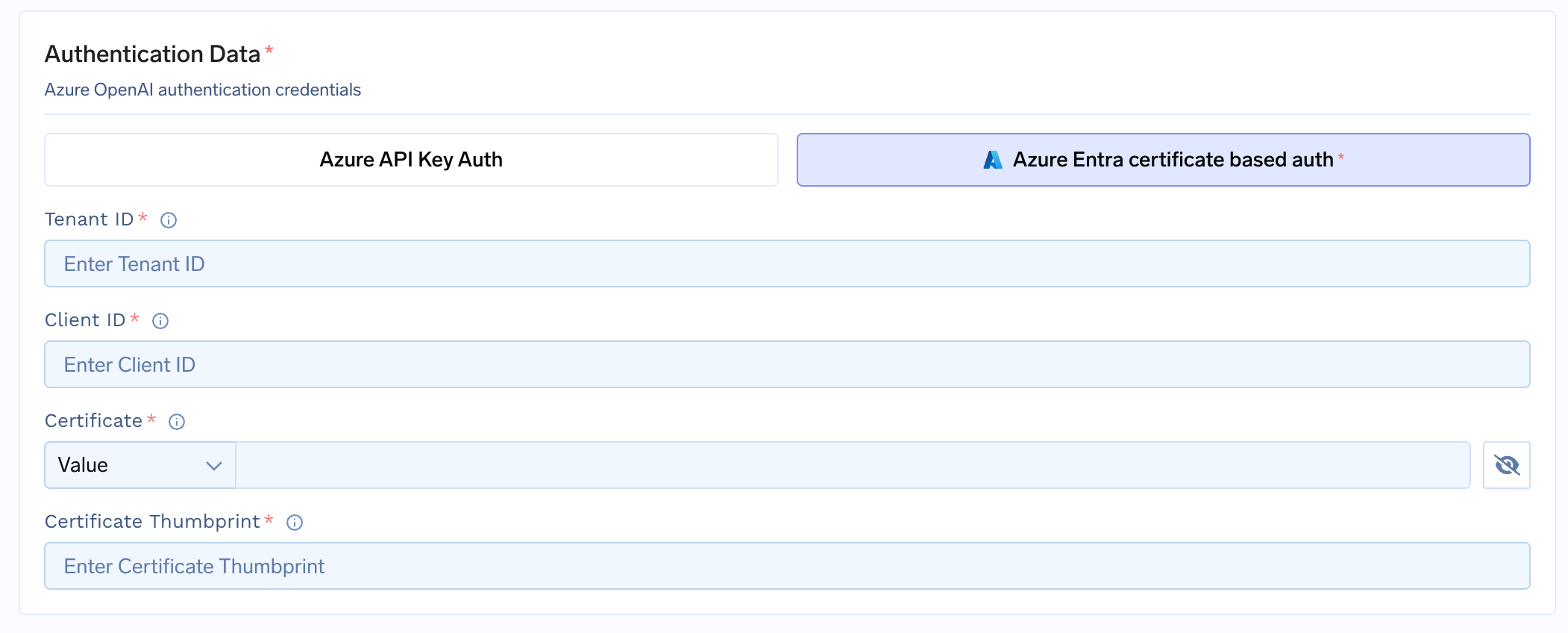

Certificatebased auth in Azure Model Integrations.

- Added support for

thinkingLevelin Gemini-3 series Models. - Added support

reasoningin Azure Anthropic Models. - Added support for customisation of base URL in Palo Alto Prisma AIRS Guardrails.

Release instructions

- Update `truefoundry` helm chart version to `0.115.2`.

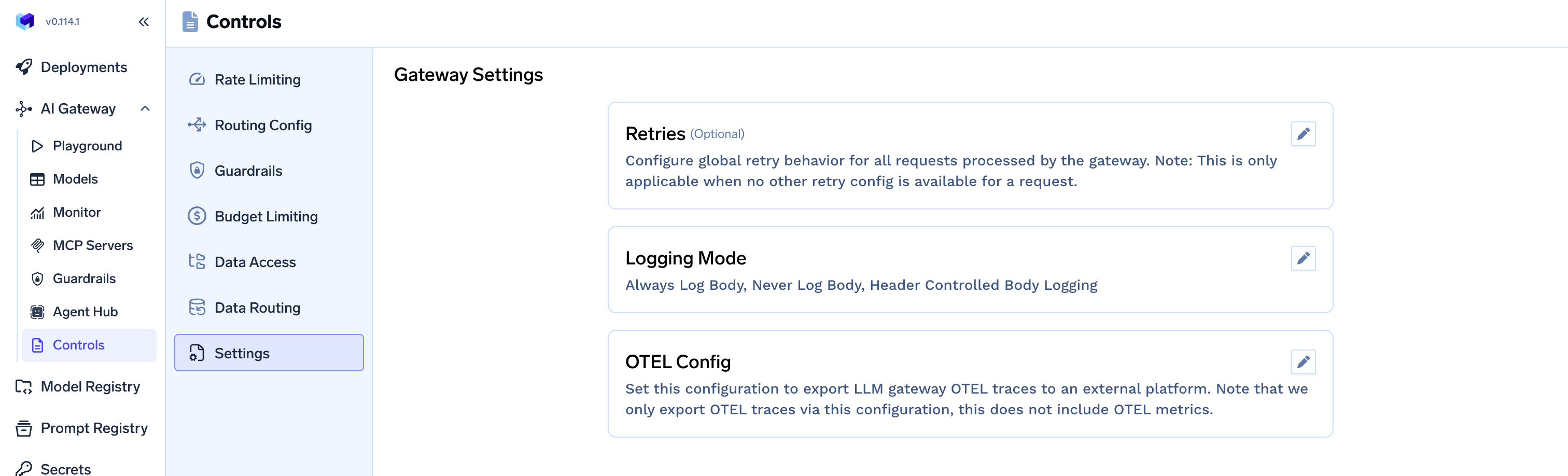

New: AI Gateway Settings

You can now configure global AI Gateway settings from Controls. These settings include Retry, Logging Mode, and Additional OTEL Config.

More Updates

- Added support for Files API in Anthropic.

- Bugfix: fixed Prompt tokens calculation for Google Vertex Anthropic models.

- Added cached tokens info in Groq response.

- Added support for Anthropic models in Azure Foundry.

- Change: The `AI Gateway > Controls > OTEL Config` tab is merged into `AI Gateway > Controls > Settings`.

- Change: The `Settings > Default Data Location` section is moved to the `AI Gateway > Controls > Data Routing` tab.

- Behavior Change: For workloads with Auto-Shutdown enabled along with On-Demand nodes, TrueFoundry now automatically adds a pod disruption budget for max availability of 25%. This reduces disruption of these workloads in case of node consolidation.

Release instructions

- Update `truefoundry` helm chart version to `0.114.1`.

New: TrueFoundry Agent Hub

Centralized platform for building, registering, discovering, and orchestrating AI agents within an organization. This will support building complex agents as well as registering and using pre-existing agents. Read more

More Updates

- Added support for real-time SSE streaming for the `streamable-http` transport in MCP Gateway.

- Update: username and password fields are now optional for SMTP integration.

Release instructions

- Update `truefoundry` helm chart version to `0.113.2`.

Improvement: Simplified management for MCP Servers

MCP Server integrations are now standalone, top-level resources with their own permissions and simplified management.

More Updates

- Added support for Google Gemini image models

- Tiered pricing in Google Vertex AI models

- Behavior Change: Guardrails now run in parallel to reduce latency in AI Gateway. Read more

- Added support for certificate based auth in Azure OpenAI Integration

- Added support for External identity in MCP Gateway

Release instructions

- Update `truefoundry` helm chart version to `0.112.1`.

Create your own custom roles and assign them to Users

You can now create custom tenant-level roles and assign them to users. Read more

More Updates

- Bugfix - fixed SLA cutoff in priority based routing config

- Added support for xAI model provider in AI Gateway

- Added Request Failure metrics for tools in MCP metrics

- Behavior Change: For production GPU workloads, TrueFoundry now automatically adds a pod disruption budget for max availability of 25%. This reduces disruption of GPU workloads in case of node consolidation.

Release instructions

- Update `truefoundry` helm chart version to `0.111.2`.

New: OAuth Inbound Auth for MCP Gateway

When using MCP Servers in Cursor/VSCode, you can now use OAuth for authentication without needing to hardcode the token in mcp.json. Read more

More Updates

- Behavior Change: In AWS Parameter Store, we now store secrets as the `SecureString` parameter type instead of `String`. Read more

- A request body size limit has been added for AI Gateway requests (default: 50 MB).

- Bugfix - Fixed Assumed Role based auth for AWS Bedrock Guardrails

- Bugfix - Removed default request timeout of 5 min within AI Gateway

Release instructions

- Update `truefoundry` helm chart version to `0.110.3`.

New: Support for SCIM

TrueFoundry now supports SCIM for SAML-based SSO. SCIM enables automatic user/team management using IdP users/groups. Read more

Improved Rate Limit Config

- Rule IDs must be static (no `{}` placeholders). Use `rate_limit_applies_per` to create per-entity rate limits instead of dynamic rule IDs. Read more

More Updates

- Added support for API key based auth in AWS Bedrock model integration.

- Behavior Change: Tenant Admins can now access all entities (Models, MCP Servers, Guardrails, Agents) in AI Gateway.

Release instructions

- Update `truefoundry` helm chart version to `0.109.3`.

New: Request Caching

AI Gateway requests now support both Exact match and Semantic caching. Read more

More Updates

- Error message improvement in Self-Hosted models response via AI Gateway.

- Added Embedding model support in Cloudera.

Release instructions

- Update `truefoundry` helm chart version to `0.107.1`.

New: Support for External Identity

You can now use externally vended JWT tokens to authenticate to TrueFoundry. Read more

More Updates

- Fixed Gemini 3 Pro Model usage in Agent Response.

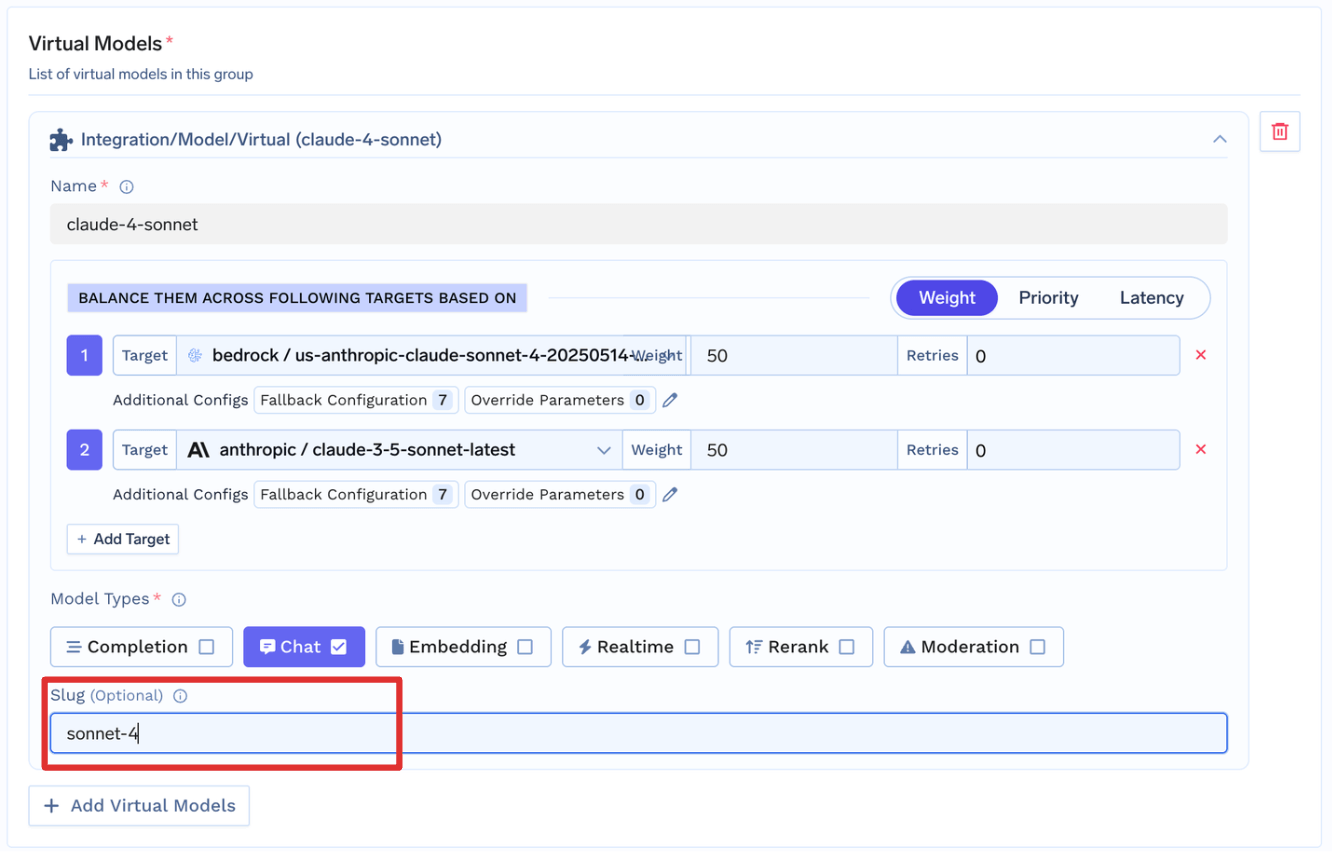

- Added support for custom Slug in Model integrations.

- Added TrueFoundry integration with Goose. Read more

Release instructions

- Update `truefoundry` helm chart version to `0.106.2`.

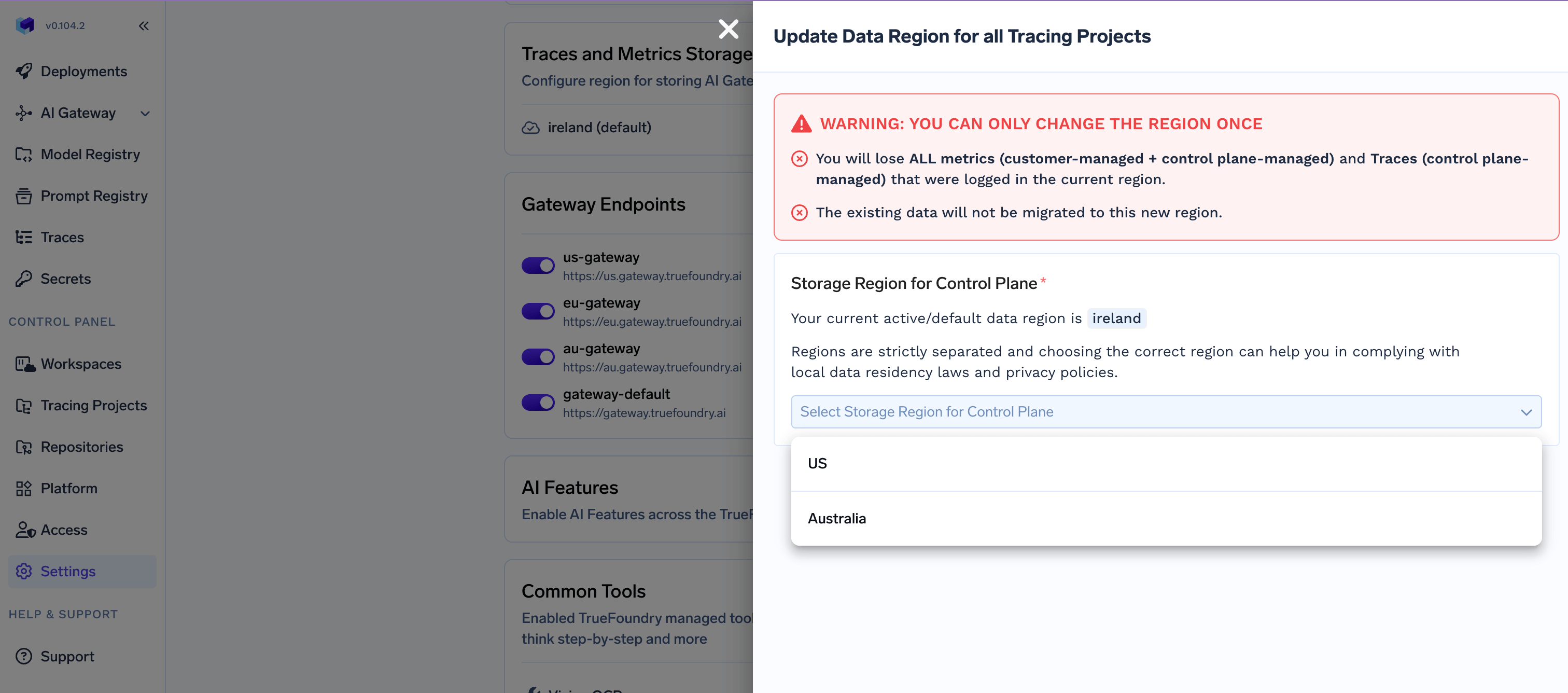

New: Configure location to store your AI Gateway Request and Metrics

We have added support for configuring the location where AI Gateway Request and Metrics data is stored. This helps in complying with local Data Residency laws and privacy policies.

This feature is only available in SaaS TrueFoundry AI Gateway

Updates and Bug Fixes

- Added support for Finetune API in Vertex Model as well. Read more

- Added support for `thought_signature` in Google Gemini and Vertex model response

Release instructions

- Update `truefoundry` helm chart version to `0.104.2`.

Updates and Bug Fixes

- Added `404` to the status codes used for default Fallback.

- Added support for GCP workload identity authentication for Vertex models. Read more

- Added support for Media resolution in Vertex Models. Read more

- Added support for `none` as a value in `reasoning_effort`. Read more

Release instructions

- Update `truefoundry` helm chart version to `0.103.1`.

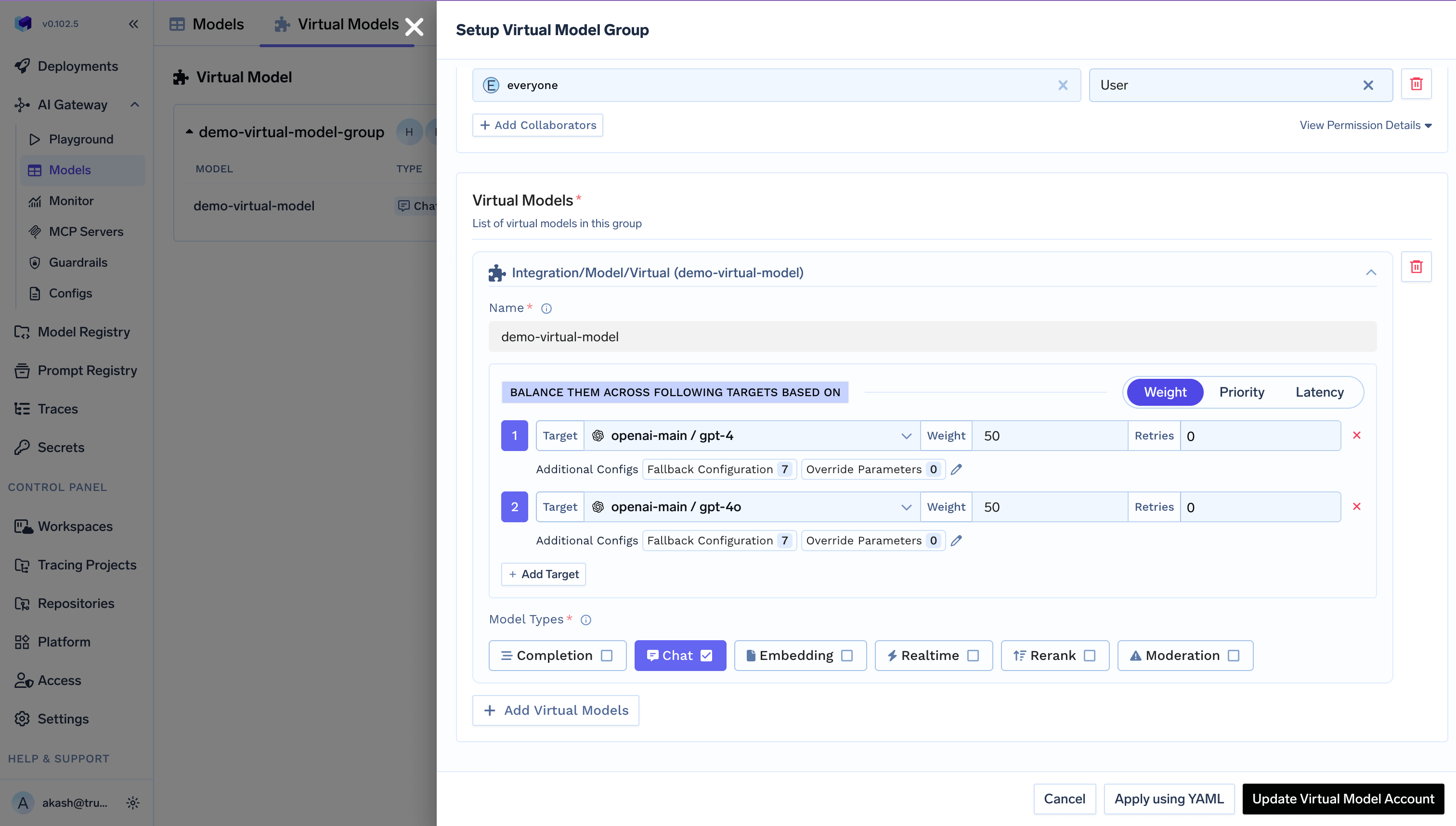

New: we now support Virtual Models

Create reusable virtual models with intelligent routing configurations to distribute requests across multiple model providers. Read more

More Updates

-

Updates on Prisma Guardrail:

- We now pass

tfy.request.conversation_idandtraceIdto all the request allowing to group messages. - We slice the payload to max size of 1.5 MB when sending to Prisma.

- We now pass

-

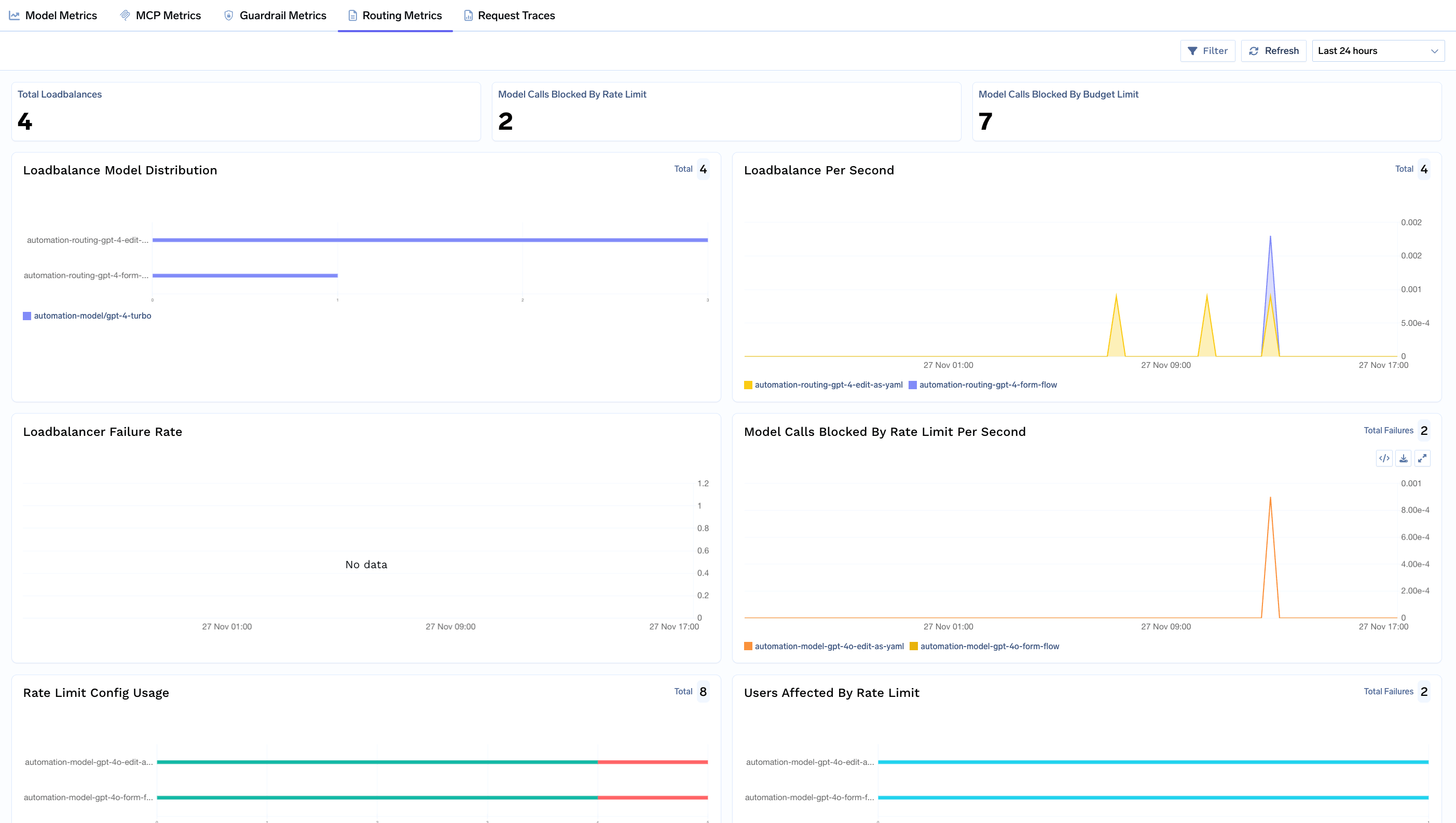

New Routing Metrics: visualize effect of different AI Gateway configs like Rate limit, Budget limit, Routing, Load Balancing or Fallback.

-

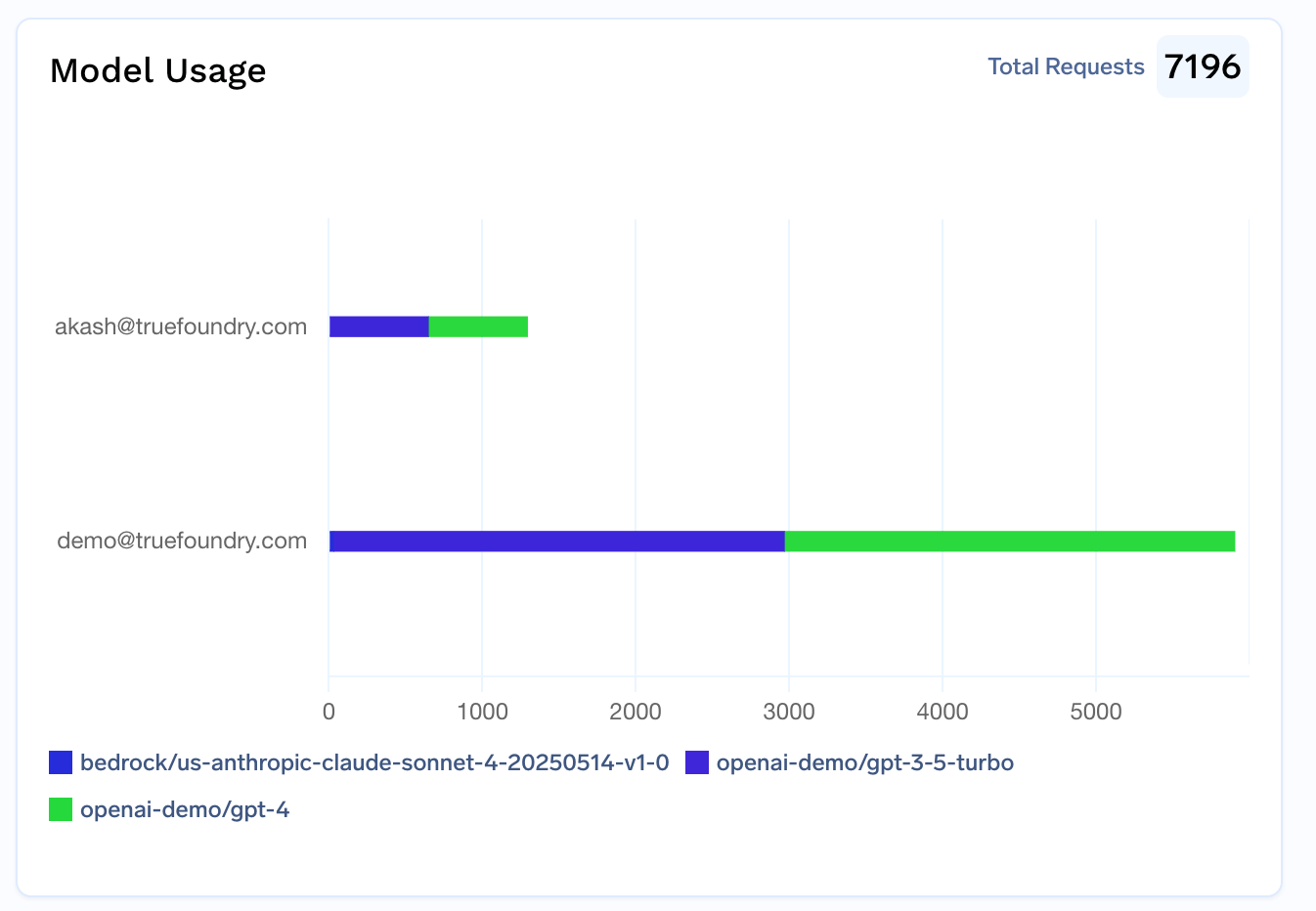

New Metrics introduced:

- Latency per output token

-

Model usage per user and per model

- Added basic tracing support for requests via Gemini CLI.

- Added support for AWS IAM role based auth in AWS SQS based async service. Read more

Release instructions

- Update `truefoundry` helm chart version to `0.102.5`.

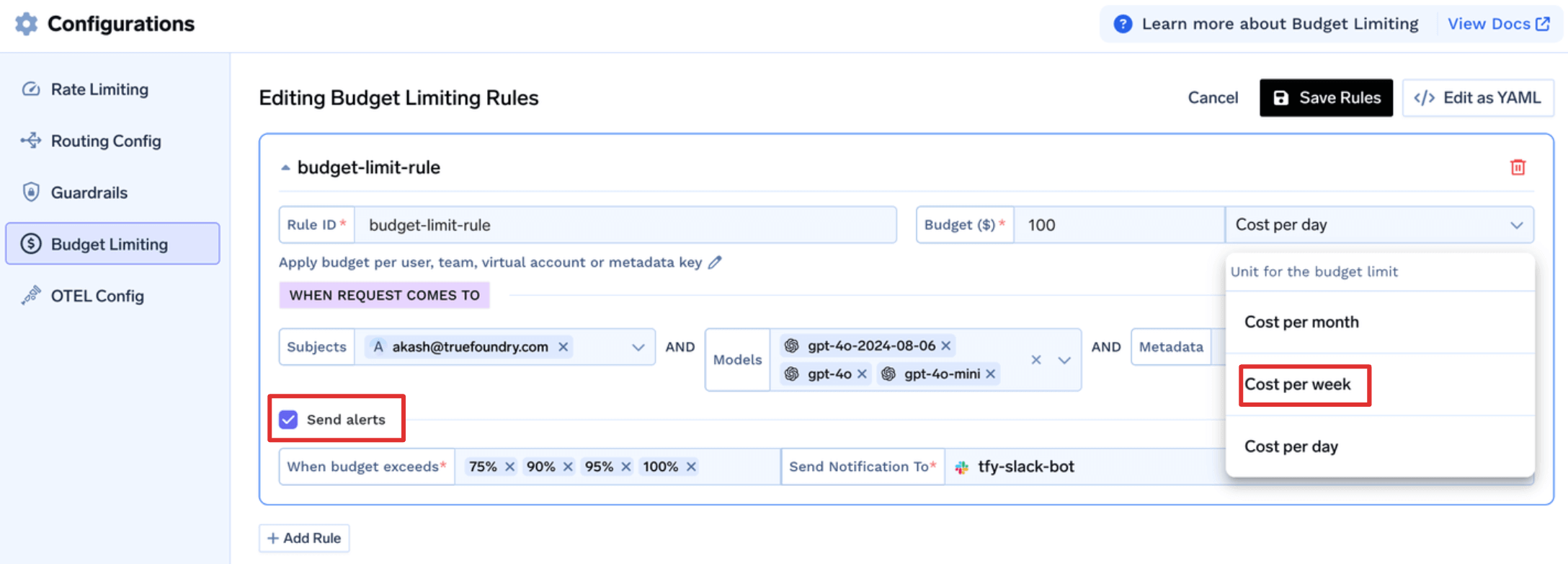

Improved Budget Limit Config

- Added support for Budget per week.

- Windows for budget usage are now fixed: a day starts at 00:00 UTC, a week starts on Monday, and a month starts on the 1st. Read more

- Added support for setting Alerts to warn you before a limit is reached. Read more
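
The fixed budget windows described in this list (day from 00:00 UTC, week from Monday, month from the 1st) can be sketched as a small helper that returns the start of the window containing a given timestamp. The function name and the window labels are hypothetical; this illustrates the boundaries stated here and is not the gateway's implementation.

```python
from datetime import datetime, timedelta, timezone


def window_start(now: datetime, window: str) -> datetime:
    """Return the UTC start of the fixed budget window that contains `now`."""
    now = now.astimezone(timezone.utc)
    day_start = now.replace(hour=0, minute=0, second=0, microsecond=0)
    if window == "day":
        return day_start
    if window == "week":
        # datetime.weekday() returns 0 for Monday.
        return day_start - timedelta(days=day_start.weekday())
    if window == "month":
        return day_start.replace(day=1)
    raise ValueError(f"unknown window: {window!r}")


# Wednesday, 19 March 2025, 15:30 UTC
now = datetime(2025, 3, 19, 15, 30, tzinfo=timezone.utc)

day = window_start(now, "day")      # 2025-03-19 00:00 UTC
week = window_start(now, "week")    # Monday 2025-03-17 00:00 UTC
month = window_start(now, "month")  # 2025-03-01 00:00 UTC
```

Because the windows are anchored to the calendar rather than to the first request, usage resets at the same instant for every rule with the same window.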

More Updates

- Behavior Change: Rate limit and Budget limit rules are now evaluated across all matching rules, and the first matching rule in order is applied. This lets users set rule priority by adjusting the order in the config.

- We now support AWS IAM role based auth when adding AWS bedrock integrations in TrueFoundry SaaS Gateway. Read more

- Bug fix: fixed Patronus AI guardrail validation, fixed adding ‘Origin’ header to API request options in Promptfoo guardrail.

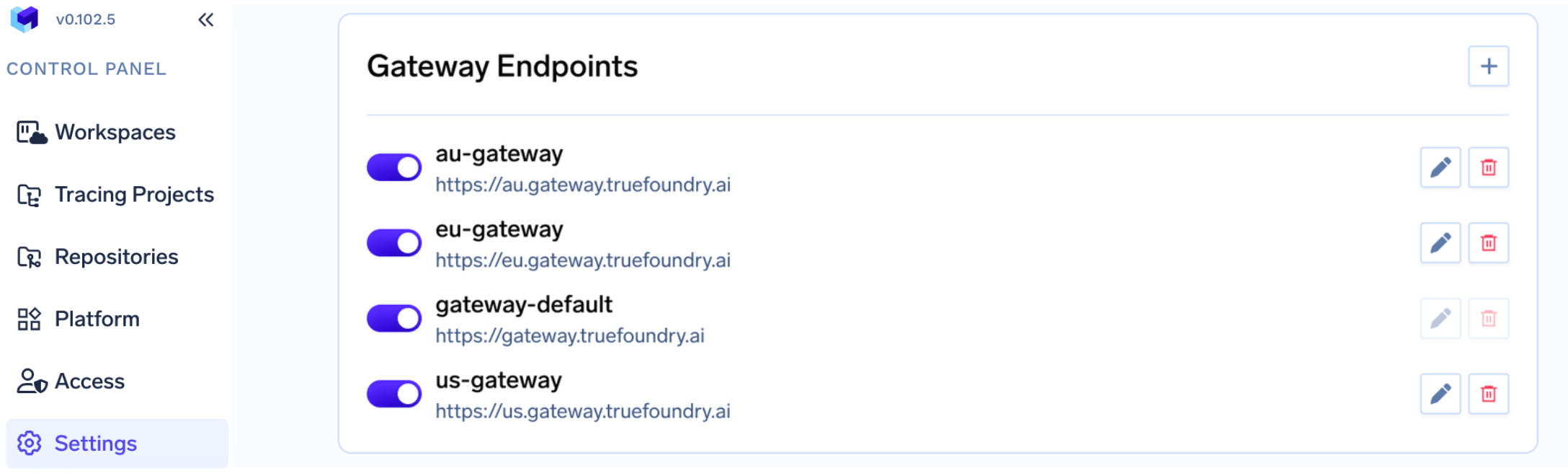

- Added support for configuring multiple AI Gateways based on BU, region, etc. Read more
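
The rule-ordering behavior change in this list (the first matching Rate limit or Budget limit rule in config order is the one applied) can be sketched as a first-match lookup. The rule shape below, with `id`, `when`, and `limit` keys, is a hypothetical simplification and not the actual config schema.

```python
from typing import Optional


def first_matching_rule(rules: list, request: dict) -> Optional[dict]:
    """Return the first rule, in config order, whose conditions all match the request."""
    for rule in rules:
        conditions = rule["when"]
        if all(request.get(key) == value for key, value in conditions.items()):
            return rule
    return None


# Hypothetical rules, ordered from most specific to least specific.
rules = [
    {"id": "search-team-model-a", "when": {"team": "search", "model": "model-a"}, "limit": 100},
    {"id": "search-team", "when": {"team": "search"}, "limit": 500},
    {"id": "default", "when": {}, "limit": 1000},
]

specific = first_matching_rule(rules, {"team": "search", "model": "model-a"})
team_only = first_matching_rule(rules, {"team": "search", "model": "model-b"})
fallback = first_matching_rule(rules, {"team": "billing", "model": "model-a"})
```

Placing the most specific rule first is what gives it priority here: the first request also matches `search-team` and `default`, but only `search-team-model-a` is applied.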

Release instructions

- Update `truefoundry` helm chart version to `0.101.2`.