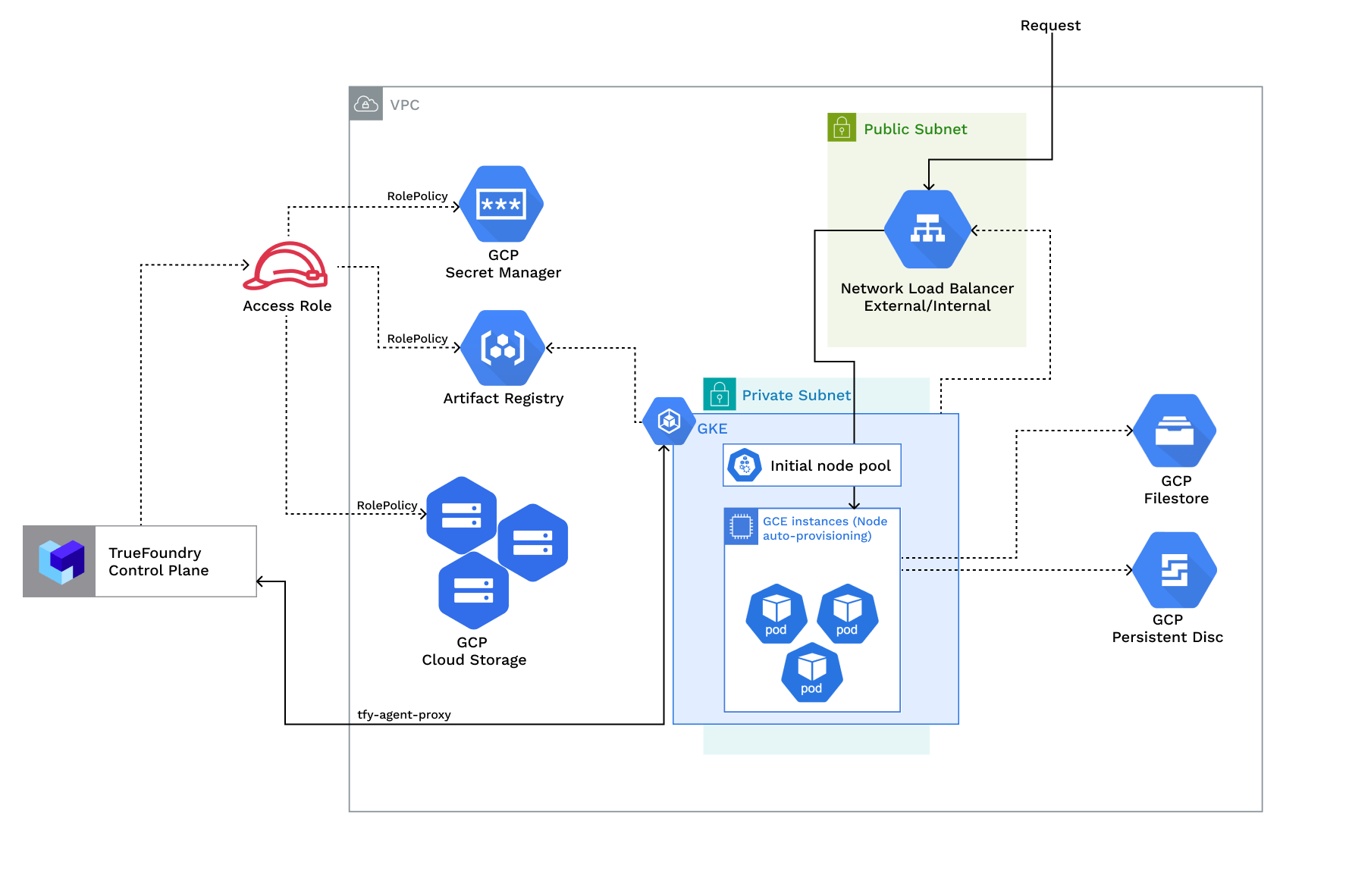

The architecture of a TrueFoundry compute plane is as follows:

Access Policies Overview

| Policy | Description |
|---|---|
| RolePolicy with policies for Artifact registry, Secrets manager, Blob storage, Cluster viewer, IAM service account token creator, and Logging viewer | Role `<cluster_name>-platform-user` with permissions for: creating and managing blob storage buckets; managing secrets in the secret manager; pulling and pushing images to the artifact registry; enabling cloud integration for GCP (node-level details); viewing cluster autoscaler logs; creating service account keys (service account key creation should be allowed) |

Requirements:

The common requirements to set up the compute plane in each of the scenarios are as follows:

- Billing must be enabled for the GCP account.

- The following APIs must be enabled in the project:

  - Compute Engine API - required for virtual machines
  - Kubernetes Engine API - required for Kubernetes clusters
  - Storage Engine API - required for GCP blob storage (buckets)
  - Artifact Registry API - required for the Docker registry and image builds
  - Secrets Manager API - required for secret management

- Egress access to the container registries `public.ecr.aws`, `quay.io`, `ghcr.io`, `tfy.jfrog.io`, `docker.io/natsio`, `nvcr.io`, and `registry.k8s.io`, so that we can download the Docker images for Argo CD, NATS, GPU operator, Argo Rollouts, Argo Workflows, Istio, KEDA, etc.

- A domain to map to the service endpoints and a certificate to encrypt the traffic. A wildcard domain like `*.services.example.com` is preferred. TrueFoundry can do path-based routing like `services.example.com/tfy/*`; however, many frontend applications do not support this. For the certificate, check this document for more details.

- Enough quota for CPU/GPU instances must be available, depending on your use case. You can check and increase quotas at GCP compute quotas.

- Service account key creation should be allowed for the service account used by the platform.
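Two of the requirements above, the enabled APIs and registry egress, can be checked before provisioning. The following is a minimal preflight sketch in Python, not part of the TrueFoundry tooling. The service IDs are an assumed mapping from the API names listed above to Google Cloud service names (verify them for your project, in particular `storage.googleapis.com`), `my-project` is a placeholder, and a successful TCP connection on port 443 only indicates basic reachability, not that image pulls will succeed.

```python
import shlex
import socket

# Assumed mapping of the API names listed above to Google Cloud service IDs.
REQUIRED_SERVICES = [
    "compute.googleapis.com",           # Compute Engine API
    "container.googleapis.com",         # Kubernetes Engine API
    "storage.googleapis.com",           # storage API (assumed ID)
    "artifactregistry.googleapis.com",  # Artifact Registry API
    "secretmanager.googleapis.com",     # secret manager API
]

# Registries named in the egress requirement above.
REGISTRIES = [
    "public.ecr.aws", "quay.io", "ghcr.io", "tfy.jfrog.io",
    "docker.io/natsio", "nvcr.io", "registry.k8s.io",
]


def enable_apis_command(project_id: str) -> list[str]:
    """Return the gcloud argument list that enables all required services."""
    return ["gcloud", "services", "enable", *REQUIRED_SERVICES,
            "--project", project_id]


def registry_hosts(registries: list[str]) -> list[str]:
    """Strip repository paths (docker.io/natsio -> docker.io), keeping order."""
    hosts: list[str] = []
    for entry in registries:
        host = entry.split("/", 1)[0]
        if host not in hosts:
            hosts.append(host)
    return hosts


def can_connect(host: str, port: int = 443, timeout: float = 5.0) -> bool:
    """Return True if a TCP connection to host:port succeeds in time."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


if __name__ == "__main__":
    # Print the command for review instead of executing it.
    print(shlex.join(enable_apis_command("my-project")))
    for host in registry_hosts(REGISTRIES):
        status = "reachable" if can_connect(host) else "blocked or unreachable"
        print(f"{host}: {status}")
```

Run the reachability check from a machine inside the target network, since egress rules on a laptop may differ from those applied to the cluster nodes.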

- New VPC and New GKE Cluster

- Existing VPC and New GKE Cluster

- Existing GKE Cluster

- The new VPC subnet should have a CIDR range of /24 or larger. Secondary ranges for pods (minimum /20) and services (minimum /24) are required, and they can come from a non-routable range. This ensures capacity for ~250 instances and 4096 pods.

- A user or service account to provision the infrastructure.
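The capacity figures in the subnet requirement follow directly from the prefix lengths. As a quick sanity check, the sketch below uses Python's standard `ipaddress` module to count addresses and to test whether a range is at least as large as a required minimum. The example ranges are hypothetical; substitute your own.

```python
import ipaddress


def address_count(cidr: str) -> int:
    """Total number of IP addresses in a CIDR block."""
    return ipaddress.ip_network(cidr, strict=True).num_addresses


def meets_minimum(cidr: str, max_prefix: int) -> bool:
    """True if the block is at least as large as /max_prefix.

    A smaller prefix length means a larger block, so /20 satisfies
    a "/24 or larger" requirement but /26 does not.
    """
    return ipaddress.ip_network(cidr).prefixlen <= max_prefix


# Hypothetical example ranges.
subnet, pods, services = "10.10.0.0/24", "10.20.0.0/20", "10.30.0.0/24"

print(address_count(subnet))              # 256 addresses in a /24
print(address_count(pods))                # 4096 addresses in a /20
print(meets_minimum(services, 24))        # True
print(meets_minimum("10.20.0.0/22", 20))  # False: a /22 is smaller than a /20
```

A /24 holds 256 addresses, of which GCP reserves four in a primary subnet range, which is consistent with the "~250 instances" figure; a /20 holds 4096 addresses, matching the pod count.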

Setting up compute plane

TrueFoundry compute plane infrastructure is provisioned using OpenTofu/Terraform. You can download the OpenTofu/Terraform code for your exact account by filling in your account details and downloading a script that can be executed on your local machine.

Enable Deployment Feature in the Platform (Optional)

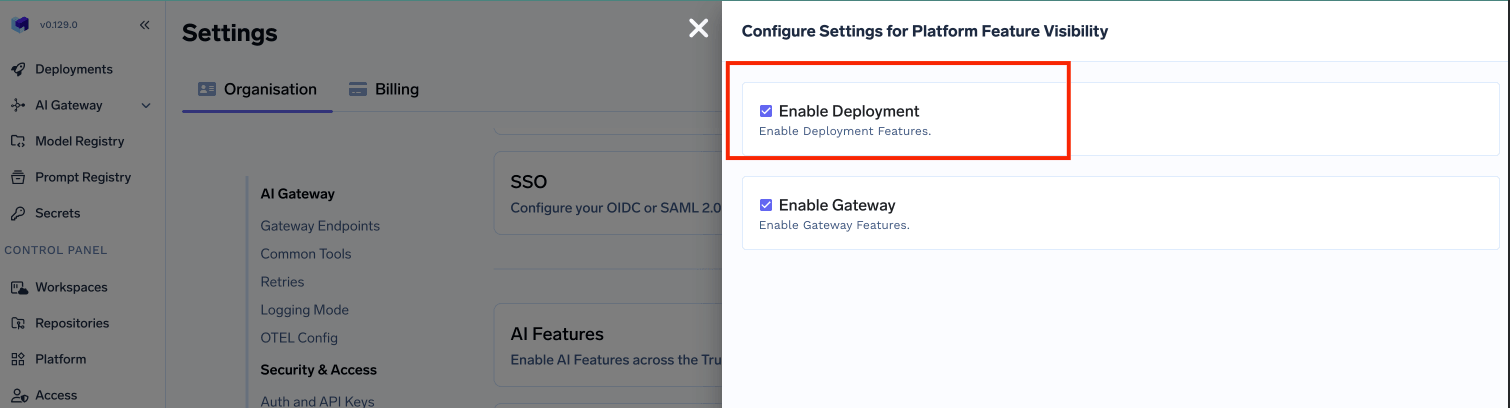

- In the left-hand navigation, go to Settings, then Platform Feature Visibility under Preferences.

- Click the Edit button, then enable the toggle for Enable Deployment.

- Click the Save button.

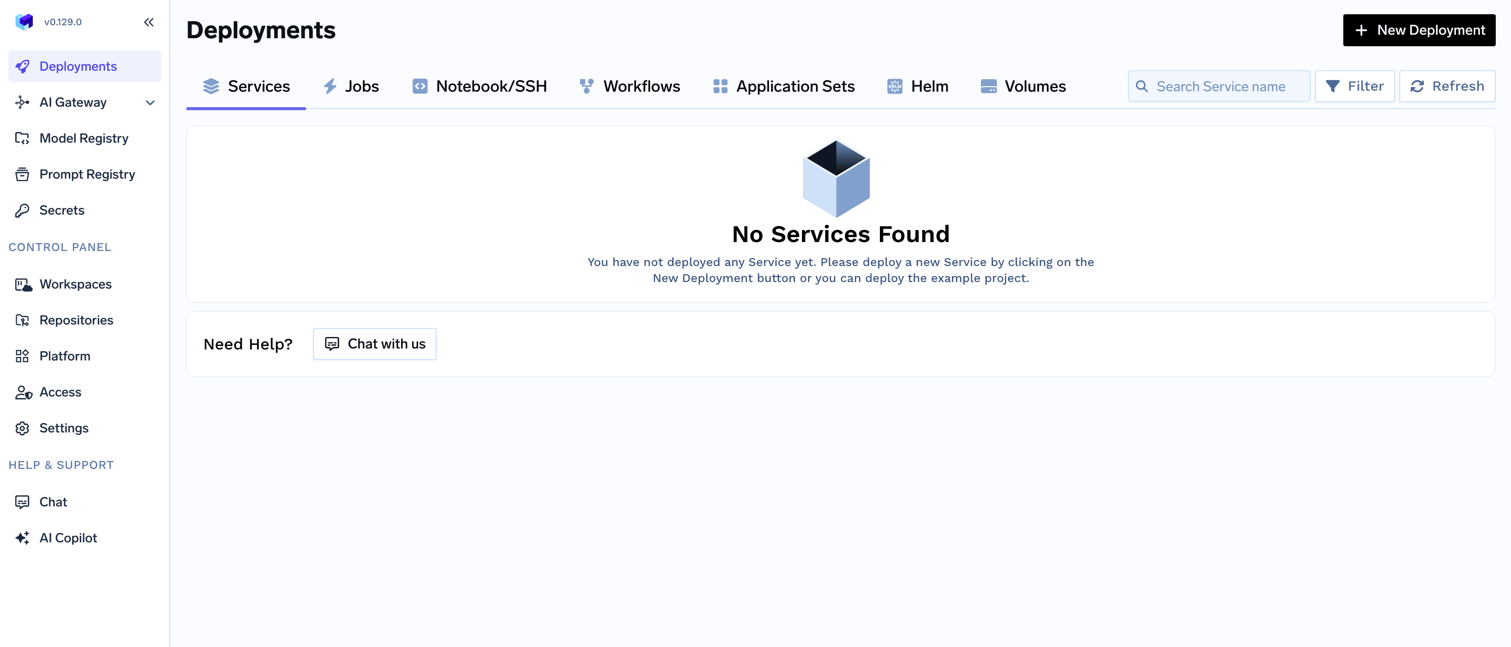

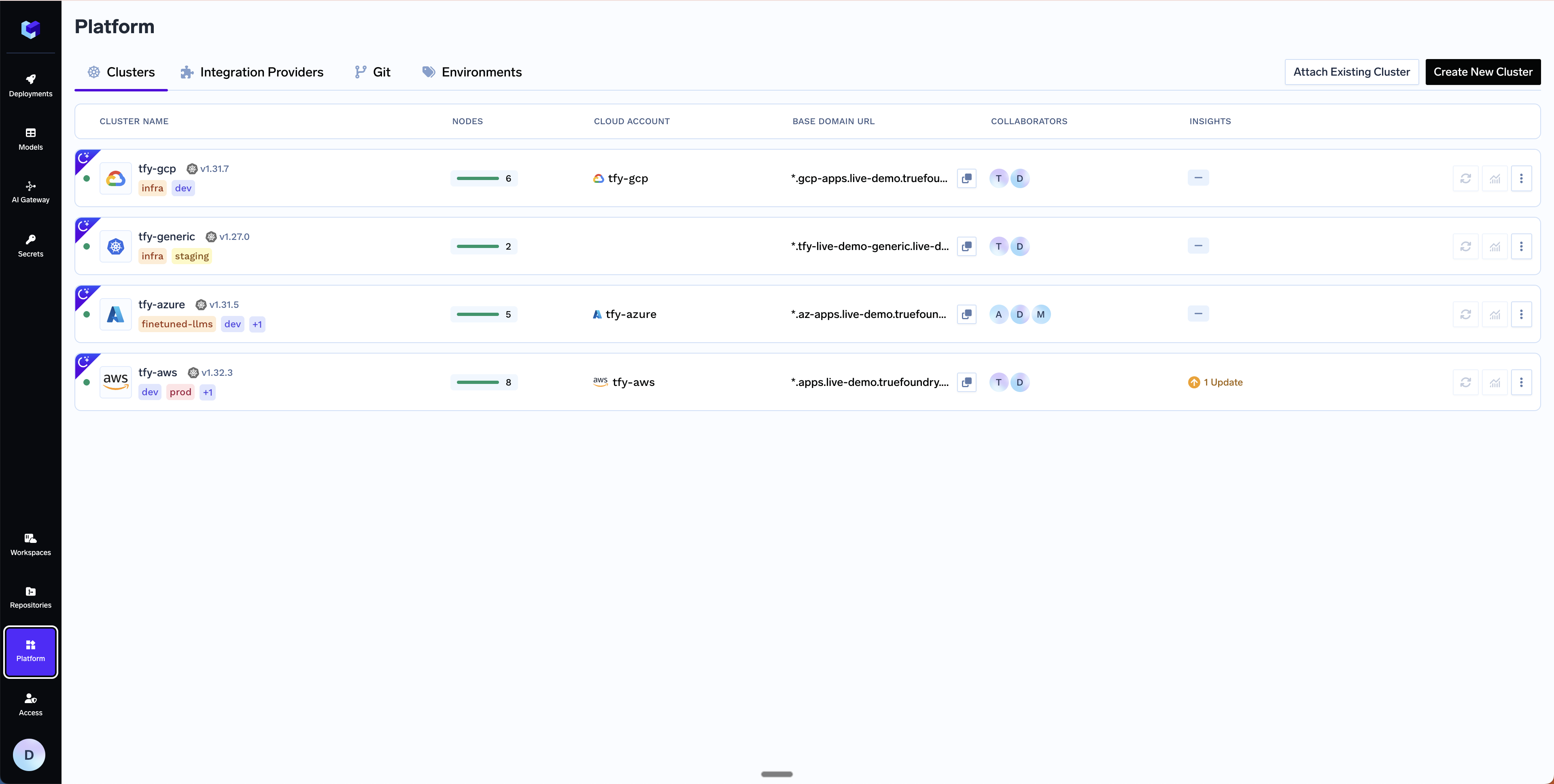

Choose to create a new cluster or attach an existing cluster

On the Clusters page, click Create New Cluster or Attach Existing Cluster, depending on your use case. Read the requirements and, if everything is satisfied, click Continue.

Fill up the form to generate the OpenTofu/Terraform code

Click Submit when done.

- Create New Cluster

- Attach Existing Cluster

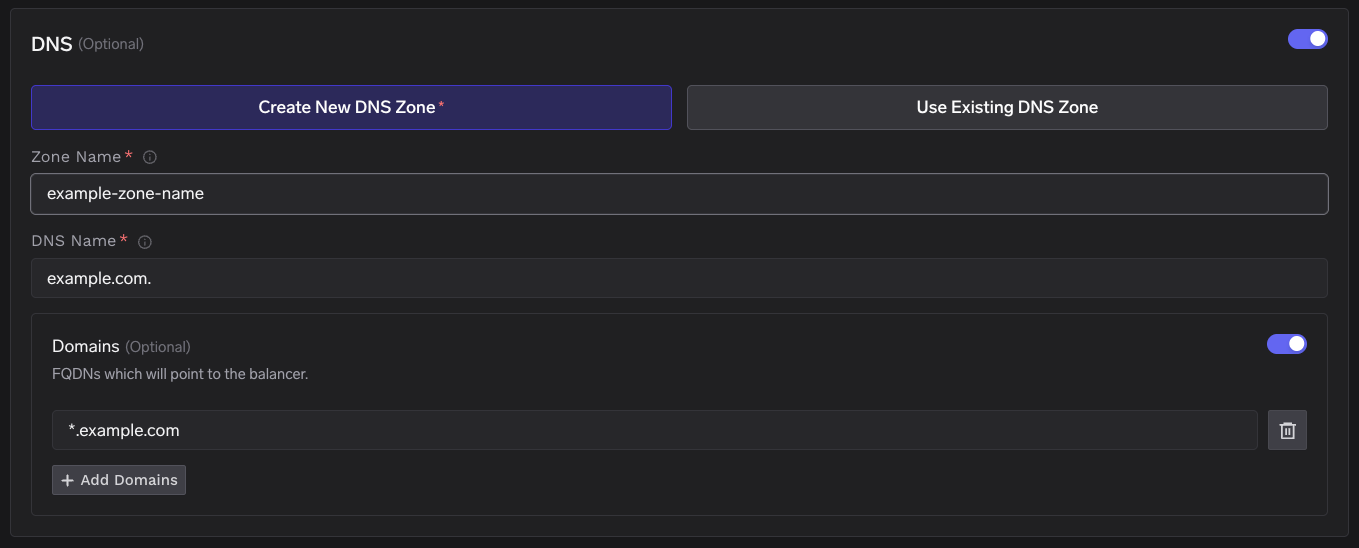

- Region - The region and availability zones where you want to create the cluster.

- Project ID - The project ID where you want to create the cluster.

- Cluster Name - A name for your cluster.

- Cluster Version and Master node IPv4 block - The version of the cluster and the IPv4 block for the master nodes.

- Network Configuration - Choose between New network or Existing network, depending on your use case.

- DNS Configuration - Configure the DNS zone and domains that will point to the cluster's load balancer. This also provisions a TLS certificate for those domains. Select New DNS Zone or Existing DNS Zone if you want TrueFoundry to provision DNS in GCP. If you use an external DNS provider (e.g., Route53, Cloudflare), you can skip this section.

- GCS Bucket for OpenTofu/Terraform State - The OpenTofu/Terraform state will be stored in this bucket. It can be a preexisting bucket or a new bucket name. A new bucket is created automatically by our script.

- Platform Features - Decides which features, such as BlobStorage, ClusterIntegration, Container Registry, and Secrets Manager, will be enabled for your cluster. To read more on how these integrations are used in the platform, please refer to the platform features page.

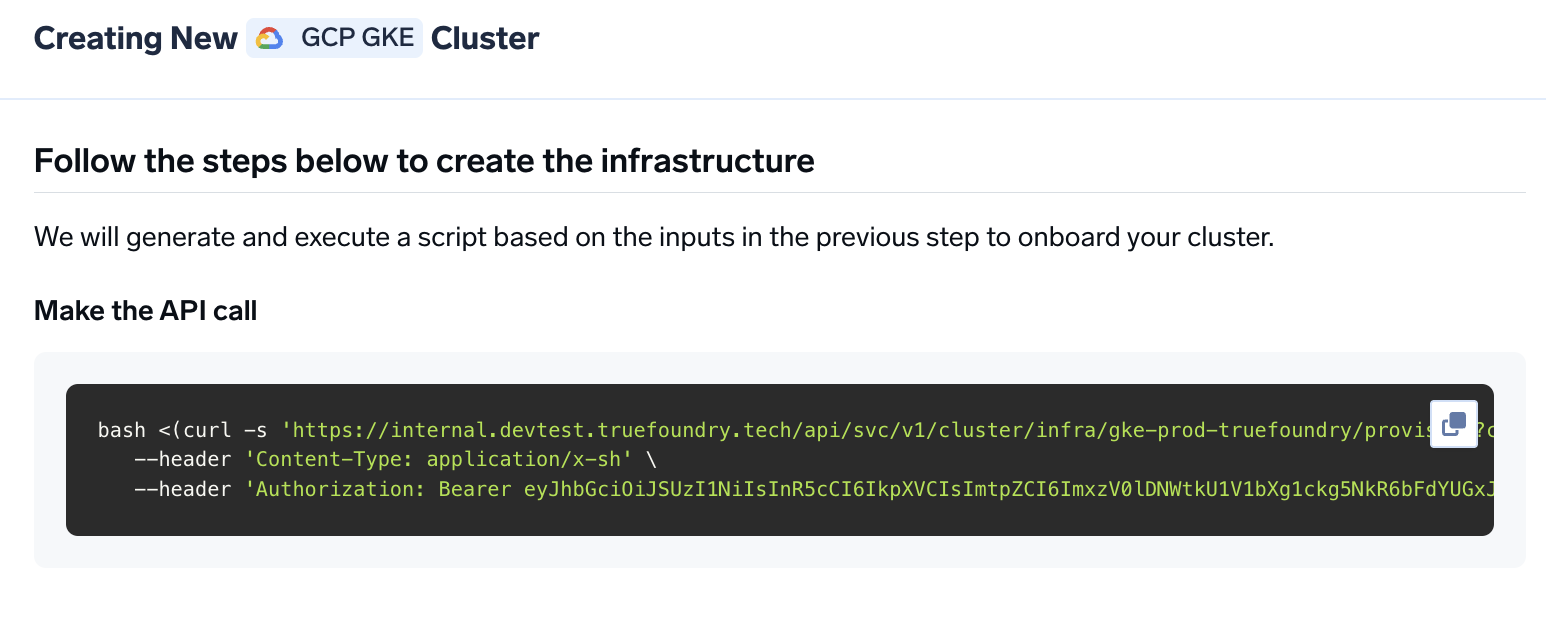

Copy the curl command and execute it on your local machine

Copy the `curl` command shown in the platform and run it to download and execute the script. The script takes care of installing the prerequisites, downloading the OpenTofu/Terraform code, and running it on your local machine to create the cluster. This takes around 40-50 minutes to complete.

Verify the cluster is showing as connected in the platform

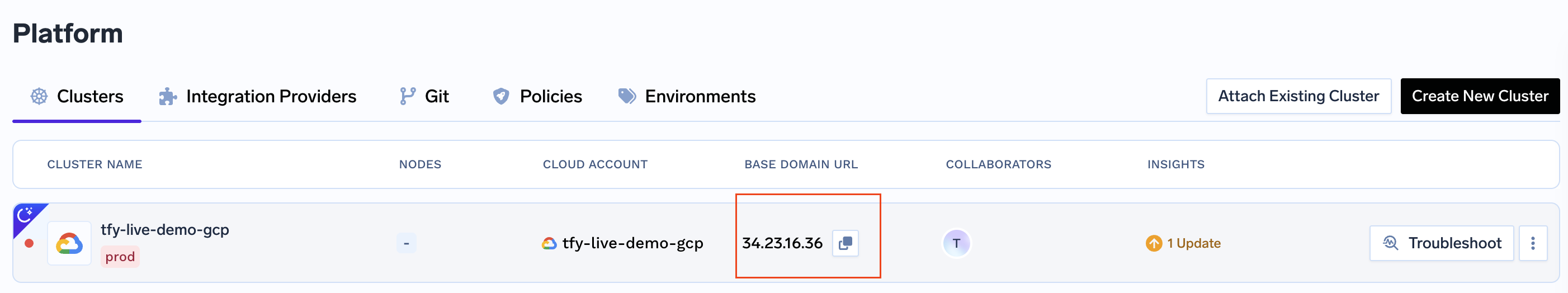

Create DNS Record

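Create a DNS record with the values below, using the load balancer IP address shown in the cluster's Base Domain URL section.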

| Record Type | Record Name | Record Value |
|---|---|---|
| A | *.tfy.example.com | LOADBALANCER_IP_ADDRESS |
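Once the record has propagated, any hostname under the wildcard should resolve to the load balancer. The sketch below is one way to check this from Python using only the standard library. The function name, the domain, and the IP address are illustrative placeholders, and the result reflects whatever your local resolver currently returns, so a recently created record may take a while to show up.

```python
import socket
import uuid
from typing import Callable


def wildcard_points_to(
    base_domain: str,
    expected_ip: str,
    resolve: Callable[[str], str] = socket.gethostbyname,
) -> bool:
    """Resolve a random label under base_domain and compare with expected_ip."""
    # Any label should match the wildcard record, so use a random one that
    # is unlikely to have an explicit record of its own.
    hostname = f"check-{uuid.uuid4().hex[:8]}.{base_domain}"
    try:
        return resolve(hostname) == expected_ip
    except OSError:  # socket.gaierror (name not found) is a subclass of OSError
        return False


if __name__ == "__main__":
    # Placeholders: use your own domain and the load balancer IP address.
    print(wildcard_points_to("tfy.example.com", "203.0.113.10"))
```

The `resolve` parameter exists only so the check can be exercised without network access; in normal use the default resolver is sufficient.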

Start deploying workloads to your cluster

FAQ

Can I use my own certificate and key files to add TLS to the load balancer?

- Create a Kubernetes secret with your certificate and key, or create a self-signed certificate.

- Once the secret is created, head over to the cluster page and navigate to the `tfy-istio-ingress` add-on. Add the secret name in the `tfyGateway.spec.servers[1].tls.credentialName` section and ensure that `tfyGateway.spec.servers[1].port.protocol` is set to `HTTPS`. Here we are using `example-com-tls` as the secret name, which contains the certificate and key.
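The commands for the first step are not shown above. As a hedged sketch, the Python helpers below build the standard `openssl` and `kubectl create secret tls` invocations and print them for review. The `istio-system` namespace, the file names `tls.crt` and `tls.key`, and the `*.example.com` domain are assumptions; the secret needs to live in whichever namespace your Istio ingress gateway runs in.

```python
import shlex


def self_signed_cert_command(
    domain: str, cert: str = "tls.crt", key: str = "tls.key"
) -> list[str]:
    """openssl invocation that writes a self-signed certificate and key."""
    return [
        "openssl", "req", "-x509", "-nodes", "-newkey", "rsa:2048",
        "-days", "365", "-keyout", key, "-out", cert,
        "-subj", f"/CN={domain}",
        # Many clients require a subjectAltName (needs OpenSSL 1.1.1 or newer).
        "-addext", f"subjectAltName=DNS:{domain}",
    ]


def tls_secret_command(
    name: str, cert: str, key: str, namespace: str = "istio-system"
) -> list[str]:
    """kubectl invocation that stores the certificate and key as a TLS secret."""
    # The default namespace is an assumption, not taken from the guide.
    return ["kubectl", "create", "secret", "tls", name,
            f"--cert={cert}", f"--key={key}", "--namespace", namespace]


if __name__ == "__main__":
    # Skip the first command if you already have a certificate and key.
    print(shlex.join(self_signed_cert_command("*.example.com")))
    print(shlex.join(tls_secret_command("example-com-tls", "tls.crt", "tls.key")))
```

Printing the commands rather than executing them keeps the step reviewable; pass the lists to `subprocess.run(..., check=True)` if you prefer to run them directly.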